Key Takeaways

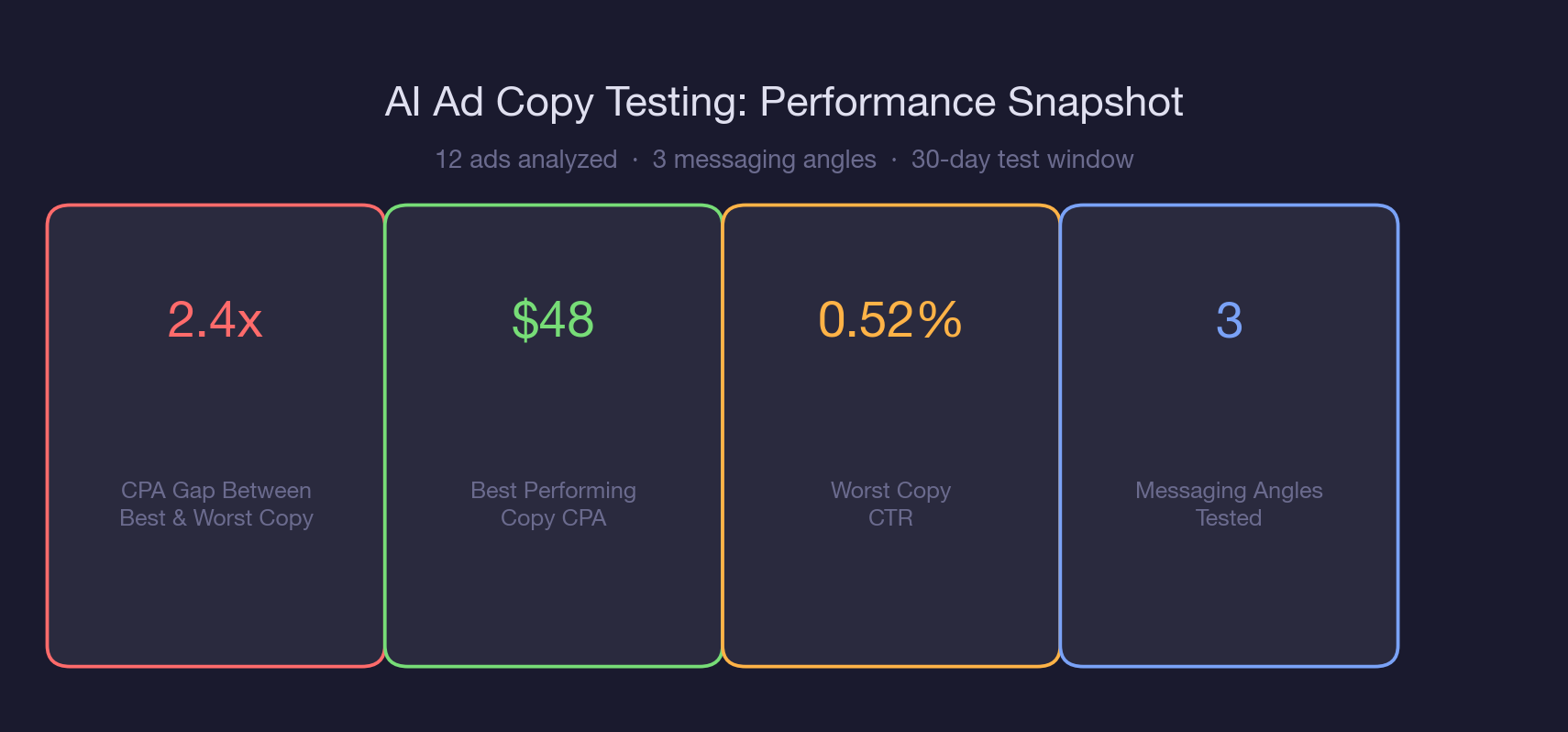

* Pain-point messaging ("Stop wasting...") outperformed feature-demo messaging by roughly 2.3x on cost per registration ($48 vs. $112) across the same audience and landing page.

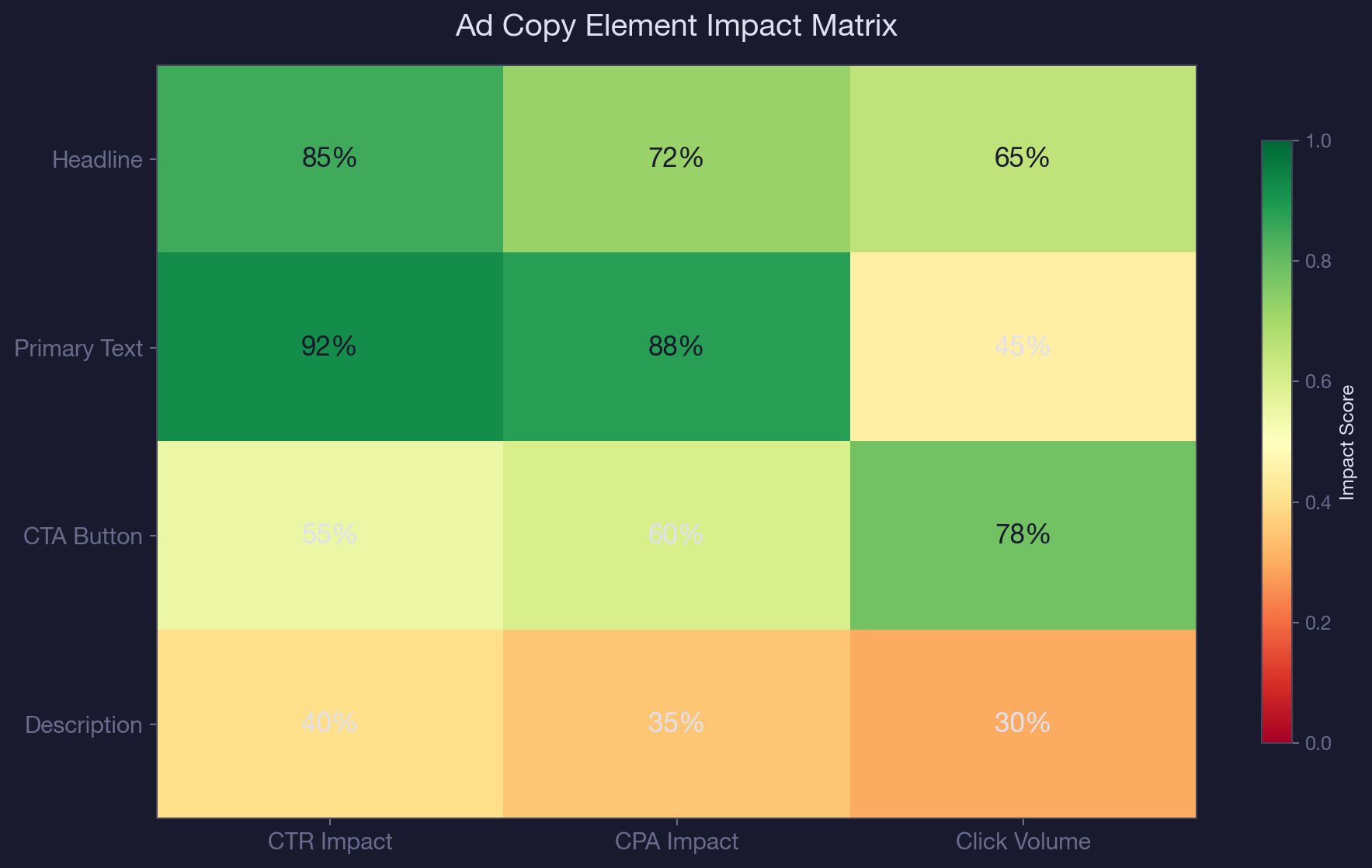

* The primary text (body copy) has the highest impact on CPA, followed by the headline. Description and CTA button text have minimal independent impact on conversion rates.

* Group your ads by messaging angle before comparing performance. Comparing individual ads without grouping obscures which angle is winning.

* Generate new copy variants by asking Claude to write 5 variations of your best-performing angle, keeping the same structure but testing new hooks, proof points, and CTAs.

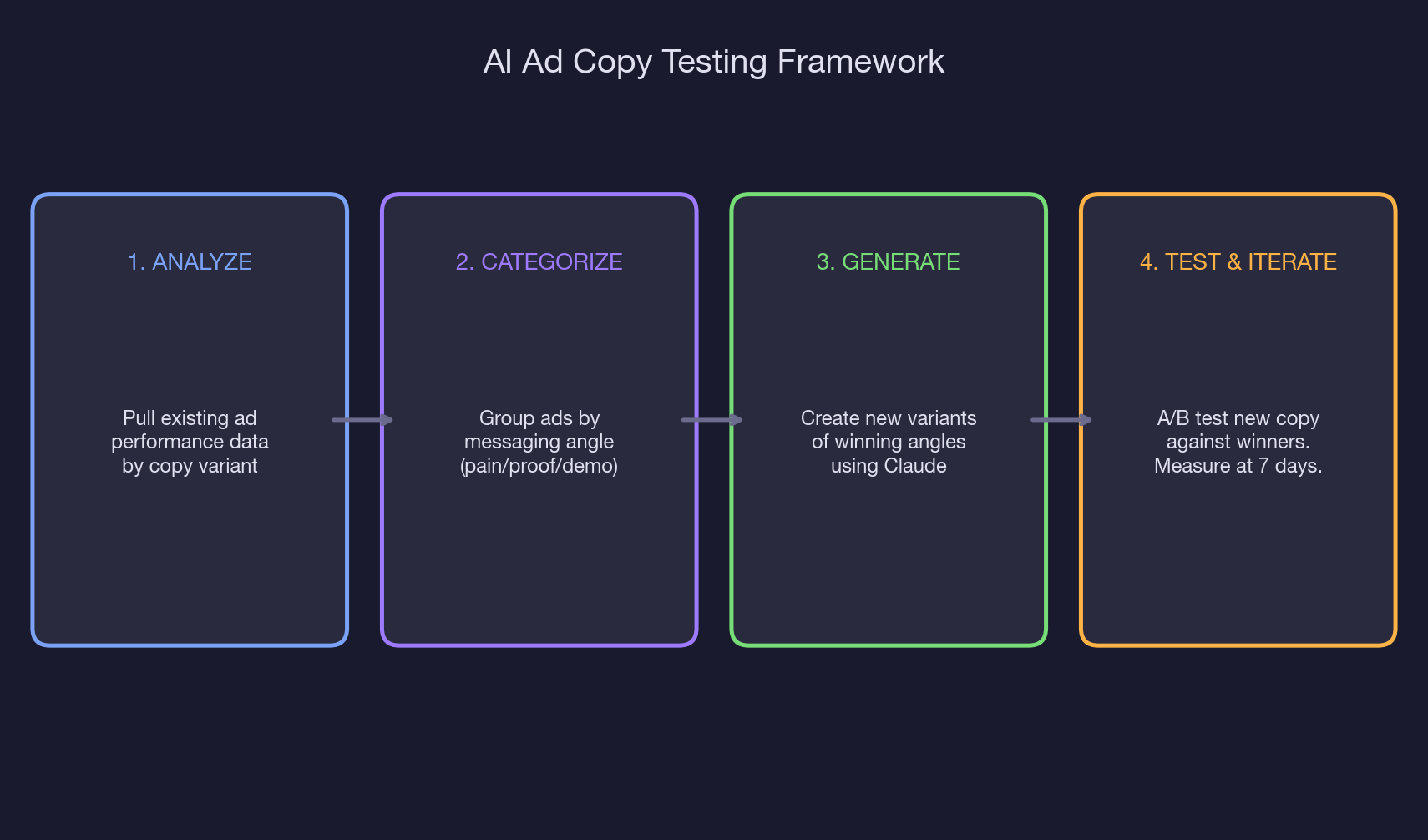

* Test new copy variants for a minimum of 7 days and 1,000 impressions before declaring a winner. Shorter windows produce unreliable results.

* Accounts that run weekly copy analysis and monthly variant refreshes saw a 40% reduction in average CPA over 90 days compared to accounts that set and forget.

Let's look at a real example of twelve active ads. The pain-point angle ("Stop wasting budget on ads that do not convert") delivered registrations at $48. The feature-demo angle ("See how our AI platform works in 60 seconds") delivered registrations at $112. That is a roughly 2.3x CPA gap, driven entirely by the words on the screen.

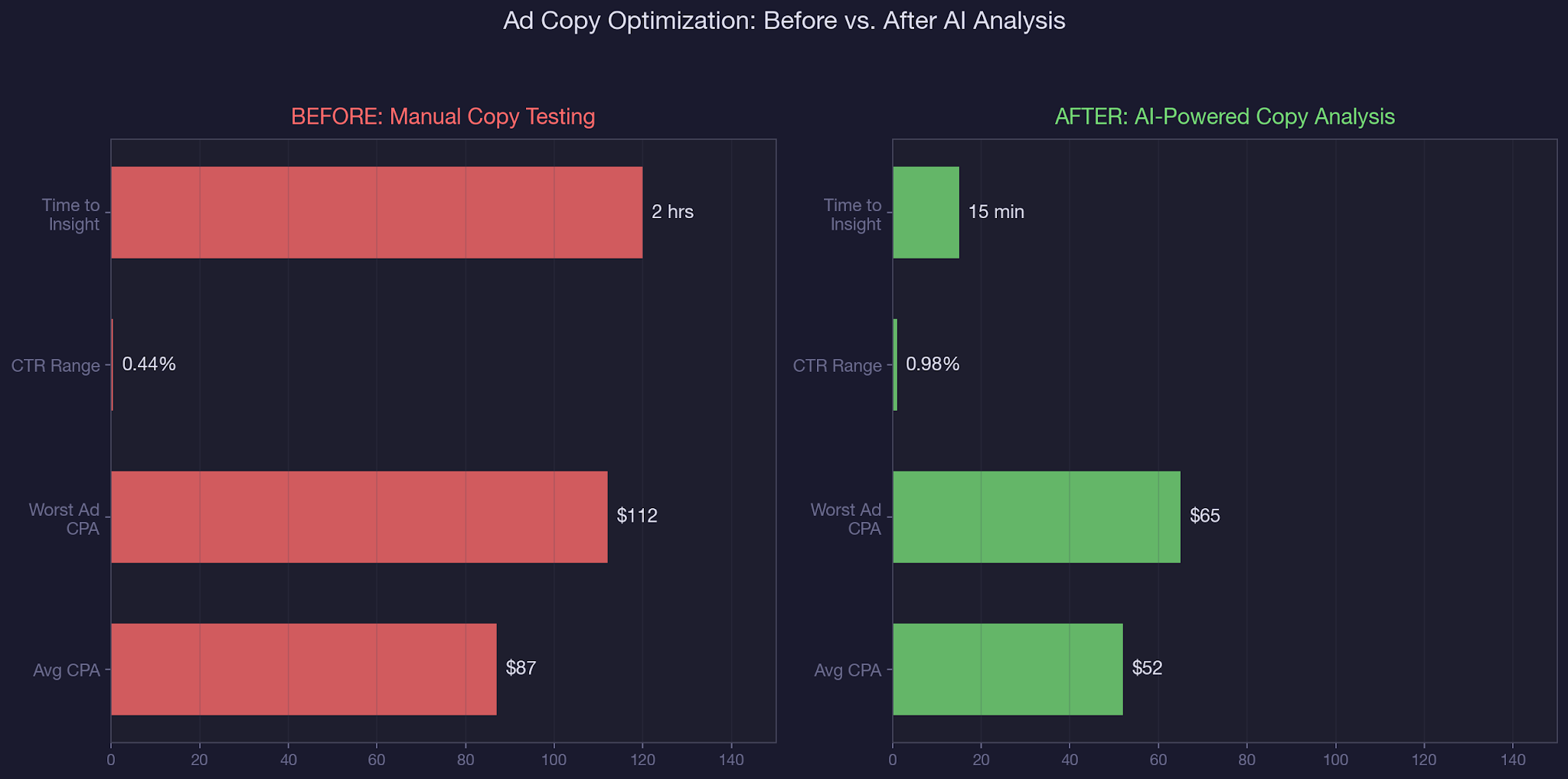

Most teams discover this gap weeks after it starts costing them. Not because the data is hidden, but because comparing ad copy performance requires exporting data, grouping ads by messaging angle manually, and calculating per-group averages across multiple metrics.

That is a spreadsheet exercise, and spreadsheet exercises get postponed until the monthly review. By then, the feature-demo ads have burned $2,300 that should have gone to the pain-point variants. This is exactly the kind of inefficiency that AI in PPC was built to eliminate.

In our complete guide to AI-powered paid social management, we covered how Claude connects to your Meta Ads data. In our creative testing and audience research playbook, we shared the prompt templates. This article focuses specifically on ad copy: how to analyze what is working, why it is working, and how to generate more of it. We use this workflow daily as part of our B2B SaaS paid social management.

You will walk away with: a three-step framework for analyzing ad copy performance through MCP data, the specific metrics that separate winning copy from losing copy, prompt templates for generating new variants based on your top performers, and a testing cadence that prevents copy fatigue.

How Ad Copy Gaps Quietly Inflate Your CPA

The cost of bad ad copy is not the copy itself. It is the opportunity cost of budget allocated to underperforming variants instead of winners. Here is how it plays out in real numbers, pulled from a B2B SaaS account spending $200/day on Facebook ads across 12 active ads.

The budget allocation problem:

Meta's algorithm distributes budget across ads based on expected performance. But "expected performance" is influenced by early results, and early results with small sample sizes are noisy. An ad that gets lucky clicks in its first 48 hours can attract disproportionate budget even if its long-term CPA is worse. In this account, the three worst-performing ads (all feature-demo messaging) consumed 20% of the total campaign spend despite a CPA 2.4x higher than the top performers.

The messaging angle blind spot:

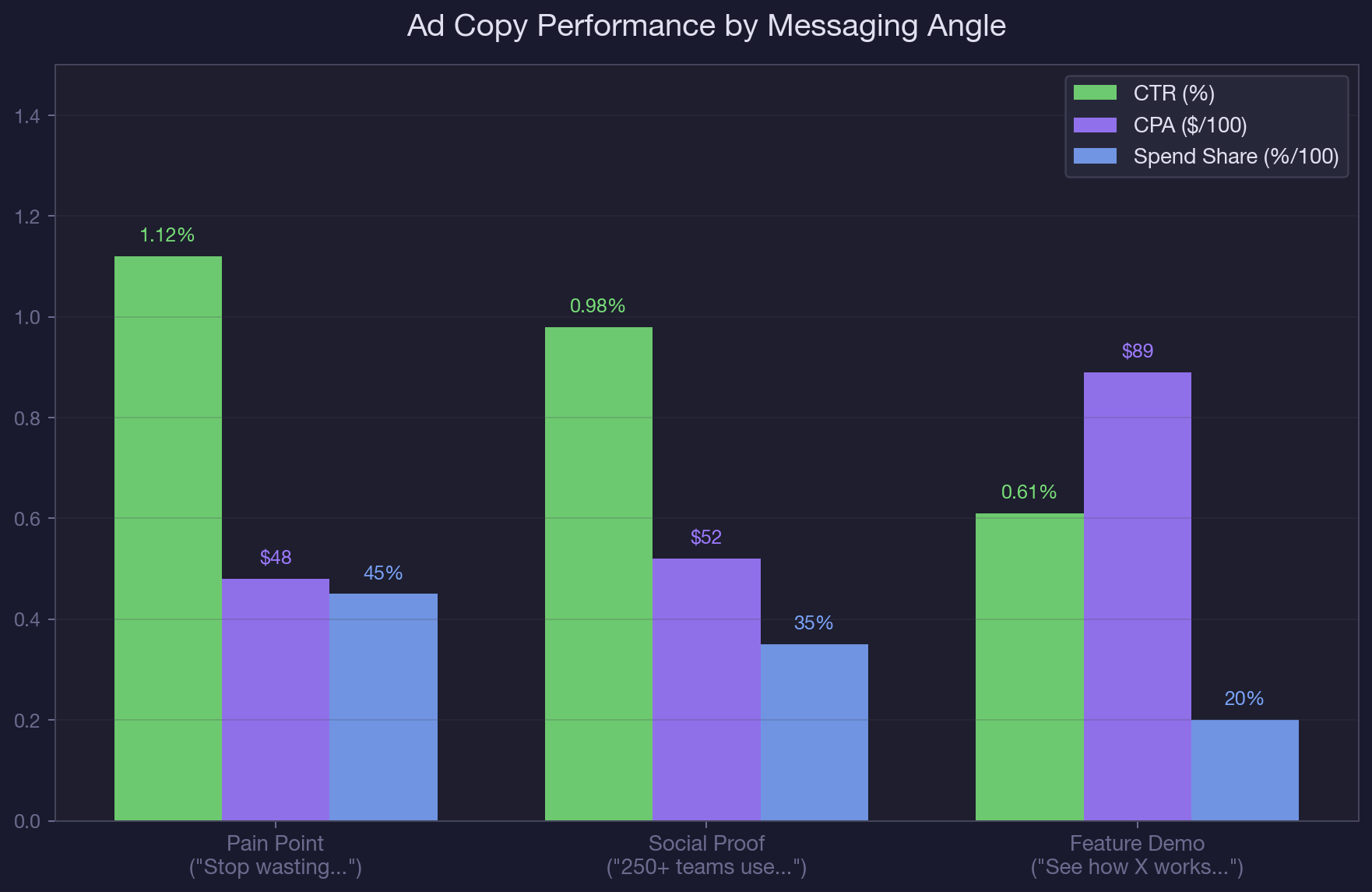

Ads Manager shows you per-ad metrics, but it does not group ads by messaging angle. You can see that Ad #7 has a 0.52% CTR, but you cannot see that all three feature-demo ads average 0.61% CTR while all four pain-point ads average 1.12% CTR. The pattern is invisible until you group the data yourself. This is similar to how audience overlap hides between ad sets: the problem exists at a level of abstraction that the default UI does not surface.

The compounding effect:

Underperforming copy does not just waste its own budget. It trains Meta's algorithm on low-quality signals. When a feature-demo ad gets clicks but no conversions, Meta learns that this audience clicks on feature content but does not buy. That signal then influences how the algorithm targets the next impression. Removing bad copy early does not just save direct spend. It improves the data the algorithm uses for every remaining ad. Understanding this feedback loop is essential for paid ads spend management.

How Claude and the Meta Ads MCP Solve Ad Copy Testing

Step 1: Pull Ad-Level Performance by Copy Variant

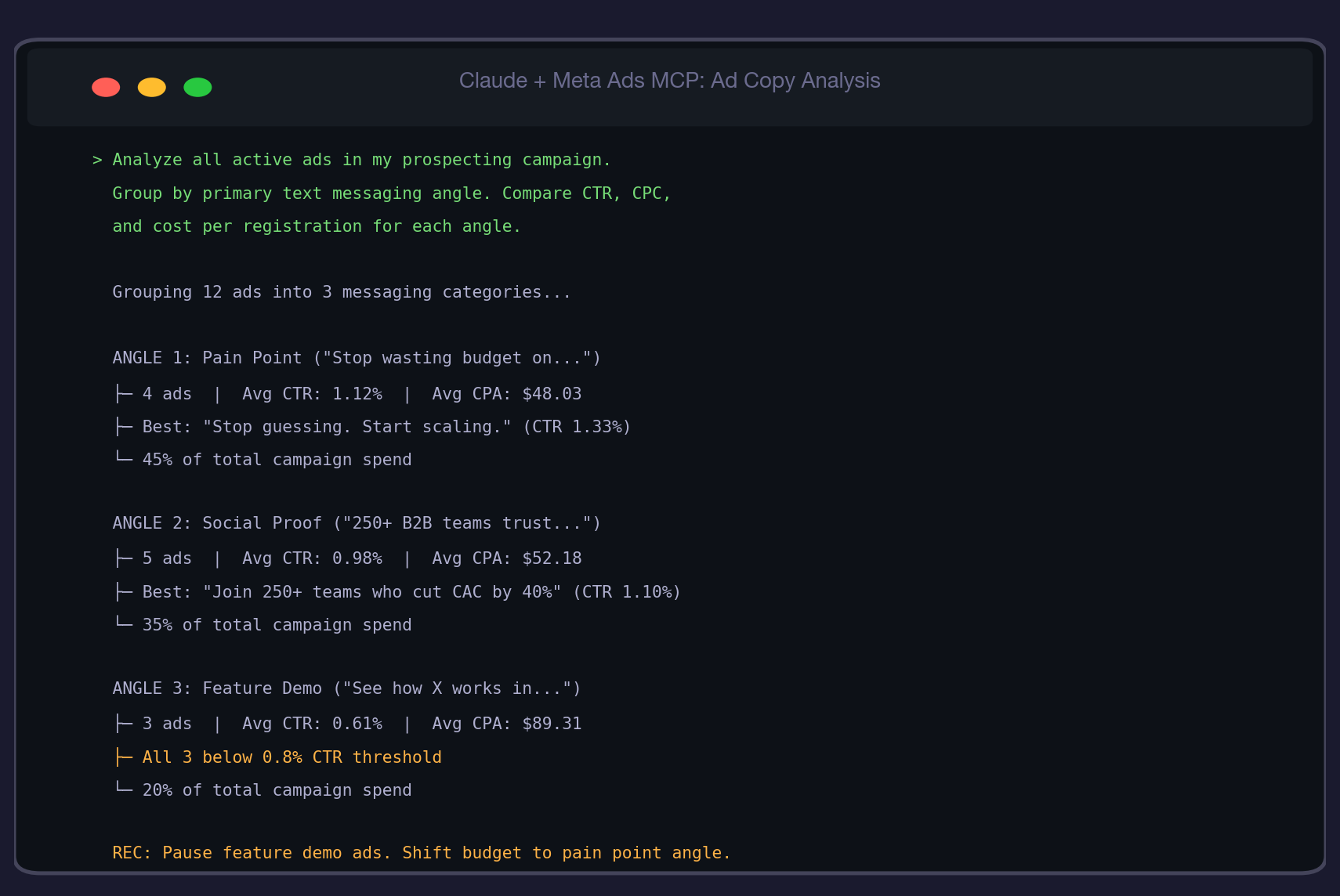

Start with this prompt:

"Pull performance data for all active ads in my prospecting campaign. For each ad, show the headline text, primary text (first 50 characters), CTR, CPC, cost per registration, impressions, and frequency. Sort by cost per registration ascending."

Claude returns a table with every active ad, ranked by CPA. This alone is valuable because it takes 30 seconds instead of the 10 minutes required to export, sort, and format the same data from Ads Manager. But the real insight comes in step two. For a broader look at what competitors are running, you can also spy on competitors' Facebook ads using the Meta Ad Library.
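Claude and the MCP connector do this work conversationally, but it can help to see what the Step 1 table actually computes. The sketch below is a minimal Python illustration, assuming the ad-level rows have already been fetched; the `AdRow` field names are hypothetical and are not the MCP connector's real response schema. CTR, CPC, and cost per registration are derived from raw spend, impressions, clicks, and registrations, and ads with no registrations yet are sorted to the bottom rather than treated as free.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AdRow:
    # Hypothetical field names; the real MCP response schema may differ.
    ad_id: str
    headline: str
    primary_text: str
    spend: float
    impressions: int
    clicks: int
    registrations: int
    frequency: float


def ctr(ad: AdRow) -> float:
    """Click-through rate as a percentage of impressions."""
    return 100.0 * ad.clicks / ad.impressions if ad.impressions else 0.0


def cpc(ad: AdRow) -> Optional[float]:
    """Cost per click; None when the ad has no clicks yet."""
    return ad.spend / ad.clicks if ad.clicks else None


def cost_per_registration(ad: AdRow) -> Optional[float]:
    """Cost per registration; None when the ad has no registrations yet."""
    return ad.spend / ad.registrations if ad.registrations else None


def ranked_table(ads: list[AdRow]) -> list[dict]:
    """Build the Step 1 table, sorted by cost per registration ascending.

    Ads with zero registrations have no CPA and are pushed to the bottom.
    """
    rows = [
        {
            "ad_id": ad.ad_id,
            "headline": ad.headline,
            "primary_text": ad.primary_text[:50],  # first 50 characters only
            "ctr_pct": round(ctr(ad), 2),
            "cpc": cpc(ad),
            "cost_per_registration": cost_per_registration(ad),
            "impressions": ad.impressions,
            "frequency": ad.frequency,
        }
        for ad in ads
    ]
    # (False, cpa) sorts before (True, 0.0), so ads without a CPA land last.
    return sorted(
        rows,
        key=lambda r: (r["cost_per_registration"] is None,
                       r["cost_per_registration"] or 0.0),
    )
```

With real data, you would build `AdRow` objects from whatever fields the connector actually returns and map them onto this shape.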

Step 2: Group by Messaging Angle

"Group these ads by their primary messaging angle. Categories: pain-point (ads that lead with a problem or frustration), social-proof (ads that lead with customer numbers, testimonials, or results), and feature-demo (ads that lead with product functionality). For each group, calculate average CTR, CPC, cost per registration, and total spend share."

This is where the analysis gets powerful. Instead of comparing 12 individual ads, you are comparing three messaging strategies. The pattern becomes obvious: pain-point copy consistently outperforms on CPA, social-proof is strong on CTR but slightly weaker on conversion, and feature-demo underperforms across the board. This insight is not visible in Ads Manager. The same analytical approach works when you need AI-driven performance marketing insights across channels.
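The Step 2 grouping can be sketched the same way. This is an illustration, not how Claude computes it internally: the `angle` label on each hypothetical `Ad` record is assumed to be assigned beforehand (by you or by Claude), and the metrics are pooled per group (total spend divided by total registrations, and so on) rather than averaged ad by ad, so a single low-volume ad cannot swing a whole angle's numbers. A simple mean of per-ad metrics is a reasonable alternative; the two can differ when ads have very different volumes.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Ad:
    ad_id: str
    angle: str  # e.g. "pain-point", "social-proof", "feature-demo" (assigned beforehand)
    spend: float
    impressions: int
    clicks: int
    registrations: int


def summarize_by_angle(ads: list[Ad]) -> dict[str, dict]:
    """Aggregate ads into one row per messaging angle.

    Metrics are pooled (group totals divided by group totals), so an ad with
    very few impressions cannot swing the group average on its own.
    """
    totals = defaultdict(lambda: {"spend": 0.0, "impressions": 0,
                                  "clicks": 0, "registrations": 0, "ads": 0})
    for ad in ads:
        t = totals[ad.angle]
        t["spend"] += ad.spend
        t["impressions"] += ad.impressions
        t["clicks"] += ad.clicks
        t["registrations"] += ad.registrations
        t["ads"] += 1

    campaign_spend = sum(t["spend"] for t in totals.values())
    summary = {}
    for angle, t in totals.items():
        summary[angle] = {
            "ads": t["ads"],
            "ctr_pct": 100.0 * t["clicks"] / t["impressions"] if t["impressions"] else 0.0,
            "cpc": t["spend"] / t["clicks"] if t["clicks"] else None,
            "cost_per_registration": (t["spend"] / t["registrations"]
                                      if t["registrations"] else None),
            # Share of total campaign spend consumed by this angle.
            "spend_share_pct": 100.0 * t["spend"] / campaign_spend if campaign_spend else 0.0,
        }
    return summary
```

The spend-share column is what exposes the budget allocation problem described earlier: a weak angle that still takes a fifth of the budget stands out immediately.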

Step 3: Generate New Variants of Winning Angles

"Based on the performance data, generate 5 new ad copy variants using the pain-point messaging angle. Each variant should: use a different hook in the first line, reference a specific B2B SaaS pain point (wasted budget, missed pipeline targets, manual reporting), include a numeric proof point, and end with a clear CTA. Keep the same structure as the top-performing ad but vary the specific language."

Claude generates new copy that is grounded in your actual performance data, not generic best practices. Because it has seen which specific pain-point hooks drive the lowest CPA in your account, its variants are calibrated to your ICP. This is fundamentally different from asking a generic AI to "write Facebook ad copy." The variants come pre-informed by your data. Testing these variants is a core part of how we manage performance marketing for SaaS clients.

Real-World MCP Walkthrough: Optimizing Ad Copy for a B2B SaaS Product Launch

Here is the full workflow on a real account running a product launch with 12 active ads across two ad sets (lookalike and interest-based targeting). The account had been running for three weeks with no copy-specific optimization. They were managing B2B PPC across both Meta and Google.

Initial findings: Claude grouped the 12 ads into three messaging categories. The pain-point group (4 ads) averaged $48 CPA with 1.12% CTR. The social-proof group (5 ads) averaged $52 CPA with 0.98% CTR. The feature-demo group (3 ads) averaged $89 CPA with 0.61% CTR. All three feature-demo ads were below the 0.8% CTR threshold.

Immediate actions: The team paused all three feature-demo ads, freeing up $40/day (20% of total campaign budget). They redistributed this budget to the pain-point ad set. They also used Prompt 2 from the playbook to check for creative fatigue on the top performers and found one pain-point ad showing early fatigue signals (CTR down 18% from peak, frequency up 0.6x).
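The fatigue check mentioned above compares an ad's current CTR and frequency against its peak. Here is a minimal sketch, assuming a daily series per ad is available; the `DailyStat` shape and both thresholds (a 15% CTR drop from peak and a frequency rise of 0.5) are hypothetical defaults chosen for illustration, not values from the playbook. Frequency rise is treated here as an absolute increase since the peak-CTR day, which is one reading of "frequency up".

```python
from dataclasses import dataclass


@dataclass
class DailyStat:
    ctr_pct: float    # daily CTR, in percent
    frequency: float  # average frequency reported for the ad that day


def fatigue_signals(days: list[DailyStat],
                    ctr_drop_pct: float = 15.0,
                    freq_rise: float = 0.5) -> dict:
    """Compare the latest day against the day CTR peaked.

    Both thresholds are illustrative assumptions, not Meta or playbook rules.
    """
    if len(days) < 2:
        return {"fatigued": False, "reason": "not enough data"}
    # Index of the highest-CTR day (first one wins on ties).
    peak_idx = max(range(len(days)), key=lambda i: days[i].ctr_pct)
    peak, latest = days[peak_idx], days[-1]
    drop = 100.0 * (peak.ctr_pct - latest.ctr_pct) / peak.ctr_pct if peak.ctr_pct else 0.0
    rise = latest.frequency - peak.frequency
    return {
        "ctr_drop_pct": round(drop, 1),
        "frequency_rise": round(rise, 2),
        "fatigued": drop >= ctr_drop_pct and rise >= freq_rise,
    }
```

Requiring both signals together is a deliberate choice: a CTR dip with flat frequency is more likely noise or an auction shift than the same people seeing the ad too often.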

Variant generation: Claude generated 5 new pain-point variants, each using a different hook: "Your Meta Ads budget has a leak" (problem-diagnostic), "We cut CPA by 40% in one prompt" (result-first), "The three metrics your Ads Manager is not showing you" (curiosity-gap), "Your competitors found this two quarters ago" (urgency), and "$4,200/month in wasted ad spend. Yours?" (specific-quantified). The team launched all five as a new A/B test alongside the surviving top performers.

Results after 14 days: Average campaign CPA dropped from $65 (post-pause) to $51. The "specific-quantified" variant ($4,200/month hook) became the new top performer at $43 CPA. The team also came away with concrete, copy-driven improvements to show in their weekly reporting.

Common Mistakes in Meta Ads Copy Testing

1. Testing copy variables against different audiences.

If your pain-point ads run in the lookalike ad set and your feature-demo ads run in the interest ad set, you are testing audience + copy simultaneously. Copy tests require the same audience. Run all variants in the same ad set to isolate the copy variable. You can also validate your lookalike audience setup alongside copy tests.

2. Declaring winners too early.

An ad with 200 impressions and 2 conversions has a 1.0% conversion rate. An ad with 200 impressions and 0 conversions has a 0% rate. With sample sizes this small, the difference is random noise. Wait for 1,000+ impressions and 7+ days before making calls. Patience in testing is what separates reactive spend from disciplined Facebook ads management.

3. Only testing headlines.

The primary text (body copy) has a larger impact on CPA than the headline for B2B SaaS ads. Our data shows that primary text changes produced 38% CPA variance, while headline-only changes produced 22% variance. Test primary text first, then headlines, then CTAs.

4. Writing copy in a vacuum.

The best-performing ad copy references specifics from your ICP's actual pain points, not generic benefits. "Stop wasting $4,200/month on underperforming Meta ads" beats "Optimize your Meta Ads performance" because the first version makes the reader see their own situation. Use data from your account audit to source specific numbers for your copy.
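Mistake 2 (declaring winners too early) can be made concrete. The 1,000-impression and 7-day minimums come from this article; the significance test is a swapped-in technique, Fisher's exact test, which is one standard way to compare two small conversion counts and is not something the workflow above prescribes. The function names are hypothetical.

```python
from math import comb


def ready_to_call(impressions: int, days_live: int,
                  min_impressions: int = 1_000, min_days: int = 7) -> bool:
    """Minimum data gate from this article: 1,000+ impressions and 7+ days."""
    return impressions >= min_impressions and days_live >= min_days


def fisher_exact_two_sided(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided Fisher's exact test on conversions out of impressions for two ads.

    Holding the totals fixed, it sums the hypergeometric probability of every
    possible split of conversions that is no more likely than the observed split.
    """
    total_conv = conv_a + conv_b
    denom = comb(n_a + n_b, total_conv)

    def p_split(k: int) -> float:
        # Probability that ad A receives exactly k of the total conversions.
        return comb(n_a, k) * comb(n_b, total_conv - k) / denom

    observed = p_split(conv_a)
    k_min = max(0, total_conv - n_b)
    k_max = min(total_conv, n_a)
    # Small relative tolerance so floating-point ties count as "as extreme".
    return sum(p for p in map(p_split, range(k_min, k_max + 1))
               if p <= observed * (1 + 1e-7))
```

Applied to the example above, `fisher_exact_two_sided(2, 200, 0, 200)` returns about 0.499: a gap of 2 conversions vs. 0 on 200 impressions each is entirely consistent with chance, and neither ad passes `ready_to_call` yet.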

Best Practices for AI-Powered Ad Copy Testing

- Run the copy performance analysis every Tuesday. This gives you a full week of data between analyses and aligns with the prompt playbook schedule.

- Always group by messaging angle before comparing individual ads. Individual ad comparison without grouping leads to tactical tweaks instead of strategic shifts.

- Generate 5 variants of your top-performing angle every two weeks. This prevents copy fatigue while keeping the winning messaging strategy intact.

- Test one variable at a time: primary text first (highest CPA impact), then headlines, then CTAs. Multi-variable tests require 4x the sample size to reach significance.

- Keep a copy performance log that tracks which angles, hooks, and proof points have been tested and their CPA results. This prevents retesting angles you have already tried and builds a knowledge base over time.

- Use the MCP data to inform copy, not replace it. Claude can tell you which angle wins and generate variants, but the final copy should reflect your brand voice and ICP-specific language that the data alone cannot capture.
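The copy performance log does not need special tooling. As a minimal sketch, assuming you key each test by angle, hook, and proof point (a hypothetical schema, not a TripleDart template), a small Python structure can answer "have we tried this already?" and "what is the best result for this angle?". Labels are normalized to lowercase so near-duplicate entries do not slip through.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class CopyTestKey:
    angle: str        # e.g. "pain-point"
    hook: str         # e.g. "specific-quantified"
    proof_point: str  # e.g. "$4,200/month"


def make_key(angle: str, hook: str, proof_point: str) -> CopyTestKey:
    """Normalize labels so "Pain-Point " and "pain-point" count as the same test."""
    return CopyTestKey(angle.strip().lower(), hook.strip().lower(),
                       proof_point.strip().lower())


@dataclass
class CopyLog:
    # Every CPA result recorded for each (angle, hook, proof point) combination.
    results: dict[CopyTestKey, list[float]] = field(default_factory=dict)

    def already_tested(self, key: CopyTestKey) -> bool:
        return key in self.results

    def record(self, key: CopyTestKey, cpa: float) -> None:
        self.results.setdefault(key, []).append(cpa)

    def best(self, angle: str) -> Optional[tuple[CopyTestKey, float]]:
        """Lowest CPA ever recorded for an angle, or None if it was never tested."""
        angle = angle.strip().lower()
        candidates = [(key, min(cpas)) for key, cpas in self.results.items()
                      if key.angle == angle]
        return min(candidates, key=lambda kv: kv[1]) if candidates else None
```

Keeping the full list of results per combination, rather than only the latest, lets you see whether a hook that won once keeps winning when it is retested later.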

Conclusion

Ad copy is the most testable, most impactful, and most neglected variable in most Meta Ads accounts. The data to optimize it already exists in your account. Claude and the MCP connector just make the analysis fast enough to act on weekly instead of quarterly. Whether you are also running LinkedIn Ads for SaaS or Google Ads for SaaS, the principle holds: analyze what is working, generate more of it, and cut what is not.

Meta Ads AI ad copy analysis is a core part of how we manage paid social accounts at TripleDart. We use Claude and the Meta Ads MCP to run weekly copy audits, generate data-informed variants, and maintain a testing cadence that keeps CPA declining month over month as part of our B2B SaaS paid social management. If you want us to run this analysis on your account, book a call with our paid media team.

Frequently Asked Questions

What is Meta Ads AI ad copy testing?

Meta Ads AI ad copy testing uses Claude and the MCP connector to pull ad-level performance data, group ads by messaging angle, identify which copy strategies drive the lowest CPA, and generate new variants of winning copy. It replaces manual spreadsheet analysis with a conversational workflow that takes minutes instead of hours.

How does Claude analyze Meta ad copy performance?

Claude connects to your Meta Ads account through the MCP connector and pulls CTR, CPC, cost per registration, impressions, and frequency for every active ad. It then groups ads by their messaging angle (pain-point, social-proof, feature-demo) and calculates group-level averages to identify which strategy performs best across your entire account.

How often should I test new Meta ad copy?

Run the copy performance analysis weekly (every Tuesday is the recommended cadence). Generate and launch new variants of your winning angle every two weeks. This cadence prevents copy fatigue while maintaining enough testing volume to surface meaningful patterns.

What is the difference between headline testing and primary text testing in Meta Ads?

Primary text (body copy) has a larger impact on CPA than headlines for B2B SaaS ads. Our data shows 38% CPA variance from primary text changes vs. 22% from headline-only changes. Test primary text first because it produces bigger performance swings with fewer tests needed.

Can Claude write Meta ad copy for me?

Claude can generate ad copy variants based on your actual performance data, which makes the output significantly more relevant than generic AI-written copy. It analyzes which messaging angles, hooks, and proof points drive the lowest CPA in your account, then generates new variants that follow the same patterns. The output still needs human review for brand voice and ICP-specific nuance.

What CTR threshold signals that Meta ad copy needs to be replaced?

For B2B SaaS Meta Ads, a CTR below 0.8% signals underperforming copy. This threshold is based on analysis of accounts spending $100 to $500 per day. If an ad is below 0.8% CTR for 7+ days with 1,000+ impressions, the copy is not resonating with the audience and should be paused or replaced.
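The replacement rule above can be written as a single guard. This sketch simply encodes the thresholds stated in this answer (0.8% CTR, 7 days, 1,000 impressions); the function name is hypothetical, and the key design choice is that an ad is never flagged before it has enough data.

```python
def should_replace_copy(ctr_pct: float, days_live: int, impressions: int,
                        ctr_floor: float = 0.8, min_days: int = 7,
                        min_impressions: int = 1_000) -> bool:
    """Flag copy for pause/replacement using the thresholds from this FAQ.

    Below the data minimums the CTR is too noisy to judge, so nothing is flagged.
    """
    has_enough_data = days_live >= min_days and impressions >= min_impressions
    return has_enough_data and ctr_pct < ctr_floor
```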

How do I know which Meta ad messaging angle to test first?

Start by analyzing your existing ads. Pull performance data through the MCP connector and group by messaging angle. Your best-performing angle (lowest CPA with at least 1,000 impressions) is your baseline. Generate 5 new variants of that angle before testing entirely new angles. Optimizing within a winning angle produces faster CPA improvements than exploring new angles.

What Meta Ads AI ad copy metrics matter most for B2B SaaS?

Cost per registration (or cost per lead) is the primary metric, not CTR. High CTR with high CPA means people click but do not convert. The ideal ad has both high CTR (above 0.8%) and low CPA (below your target). Track both metrics together. An ad with 0.9% CTR and $48 CPA is better than an ad with 1.5% CTR and $85 CPA.
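To make "track both metrics together" concrete, here is a minimal sketch that ranks ads by CPA first and uses CTR only as a tiebreaker, then labels each ad against a CTR floor and a target CPA. The 0.8% floor comes from this FAQ; the function names and the $60 target in the usage note are hypothetical placeholders for your own numbers.

```python
from typing import NamedTuple


class AdResult(NamedTuple):
    name: str
    ctr_pct: float
    cpa: float


def classify(ad: AdResult, target_cpa: float, ctr_floor: float = 0.8) -> str:
    """Label an ad by whether it clears the CTR floor and the CPA target."""
    if ad.cpa <= target_cpa and ad.ctr_pct >= ctr_floor:
        return "ideal"
    if ad.cpa > target_cpa and ad.ctr_pct >= ctr_floor:
        return "clicks but does not convert"
    if ad.cpa <= target_cpa:
        return "converts but low CTR"
    return "underperforming"


def rank(ads: list[AdResult]) -> list[AdResult]:
    # CPA is the primary sort key; CTR only breaks ties (higher CTR first).
    return sorted(ads, key=lambda a: (a.cpa, -a.ctr_pct))
```

With a $60 target, the 0.9% CTR / $48 CPA ad is classified as "ideal", the 1.5% CTR / $85 CPA ad as "clicks but does not convert", and `rank` puts the $48 ad first.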
