Key Takeaways

- Claude SEO is the practice of optimizing your brand so Anthropic's Claude cites, recommends, or mentions you when users ask relevant questions.

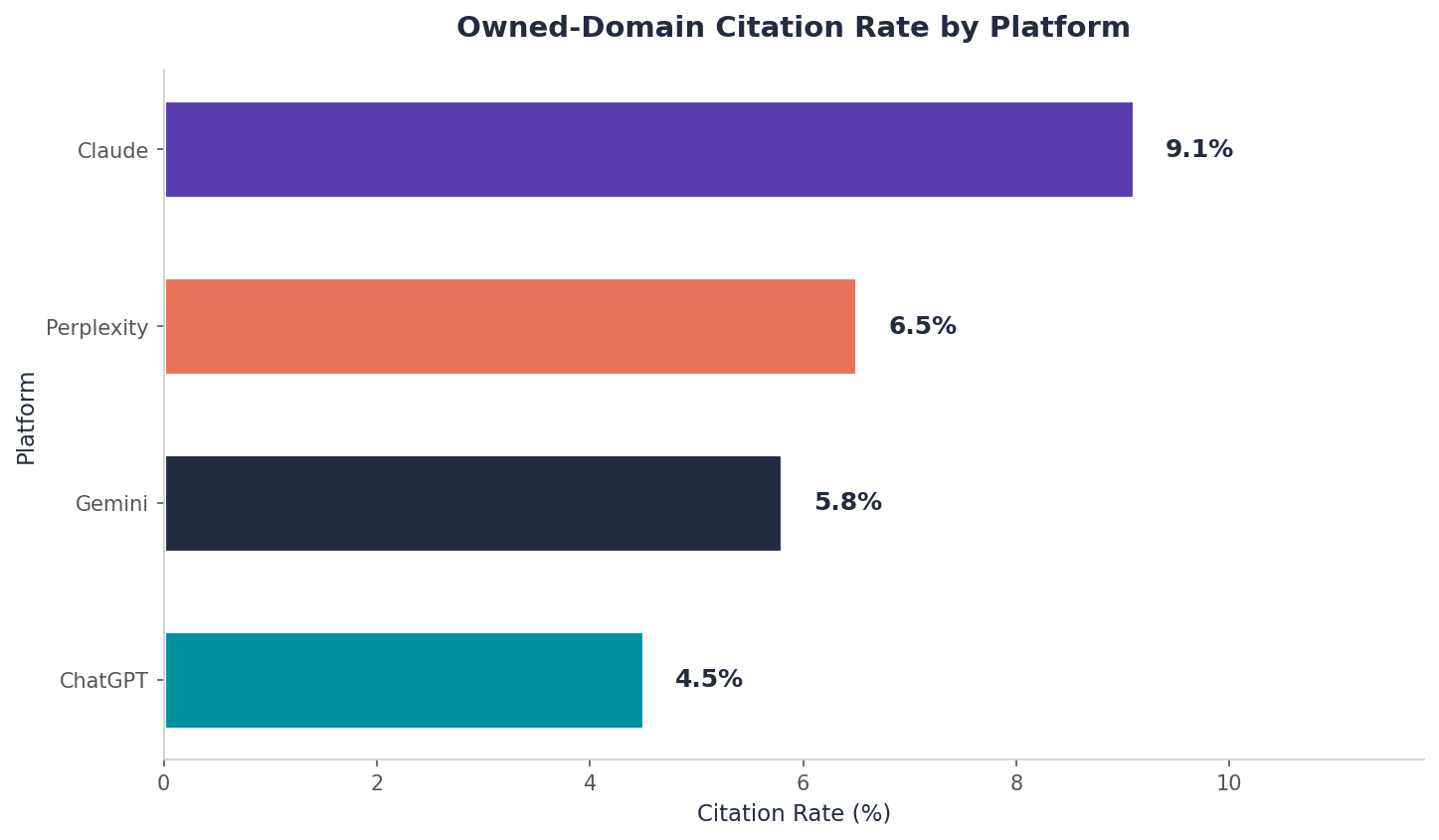

- In our data, Claude is the strongest AI platform for B2B SaaS visibility. Among the six platforms we tracked, it had the lowest "not mentioned" rate (54%) and the highest owned-domain citation rate (9.1%), double ChatGPT's 4.5%.

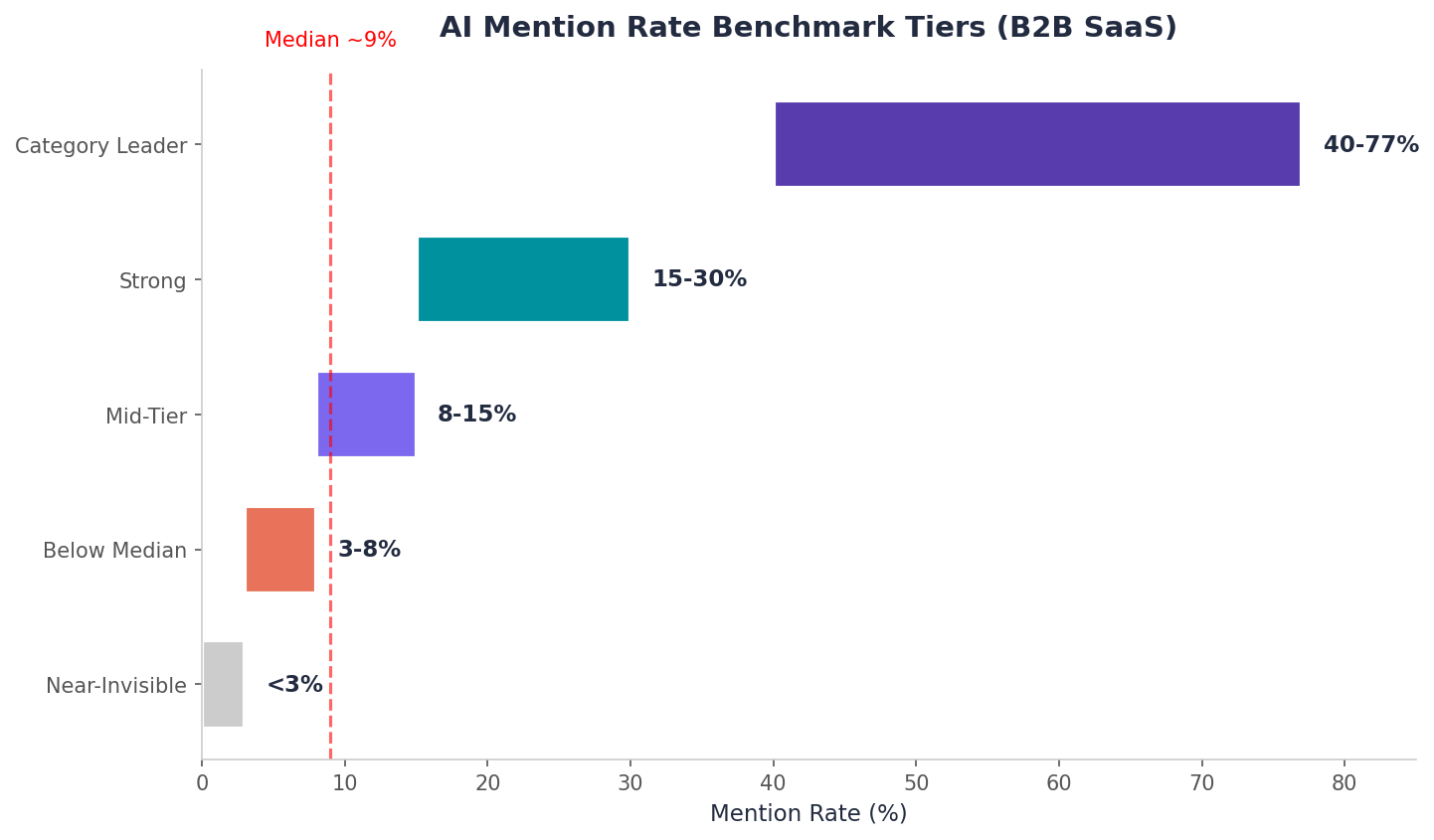

- Most brands are invisible. The median B2B SaaS mention rate is around 9%. If you are below that, you have a structural gap in your content or third-party presence.

- Third-party mentions matter more than backlinks. In an Ahrefs study of 75,000 brands, brand mentions correlated 0.664 with AI visibility versus just 0.218 for backlinks. Reddit, YouTube, G2, and LinkedIn feed AI training data directly.

- Utility content outperforms blog posts by 6x to 30x. Tool pages, diagnostic guides, and pricing pages earn dramatically more citations than keyword-targeted articles.

- Competitor comparison queries deliver 2x to 3x higher mention rates. This is the single highest-leverage content type for B2B SaaS brands.

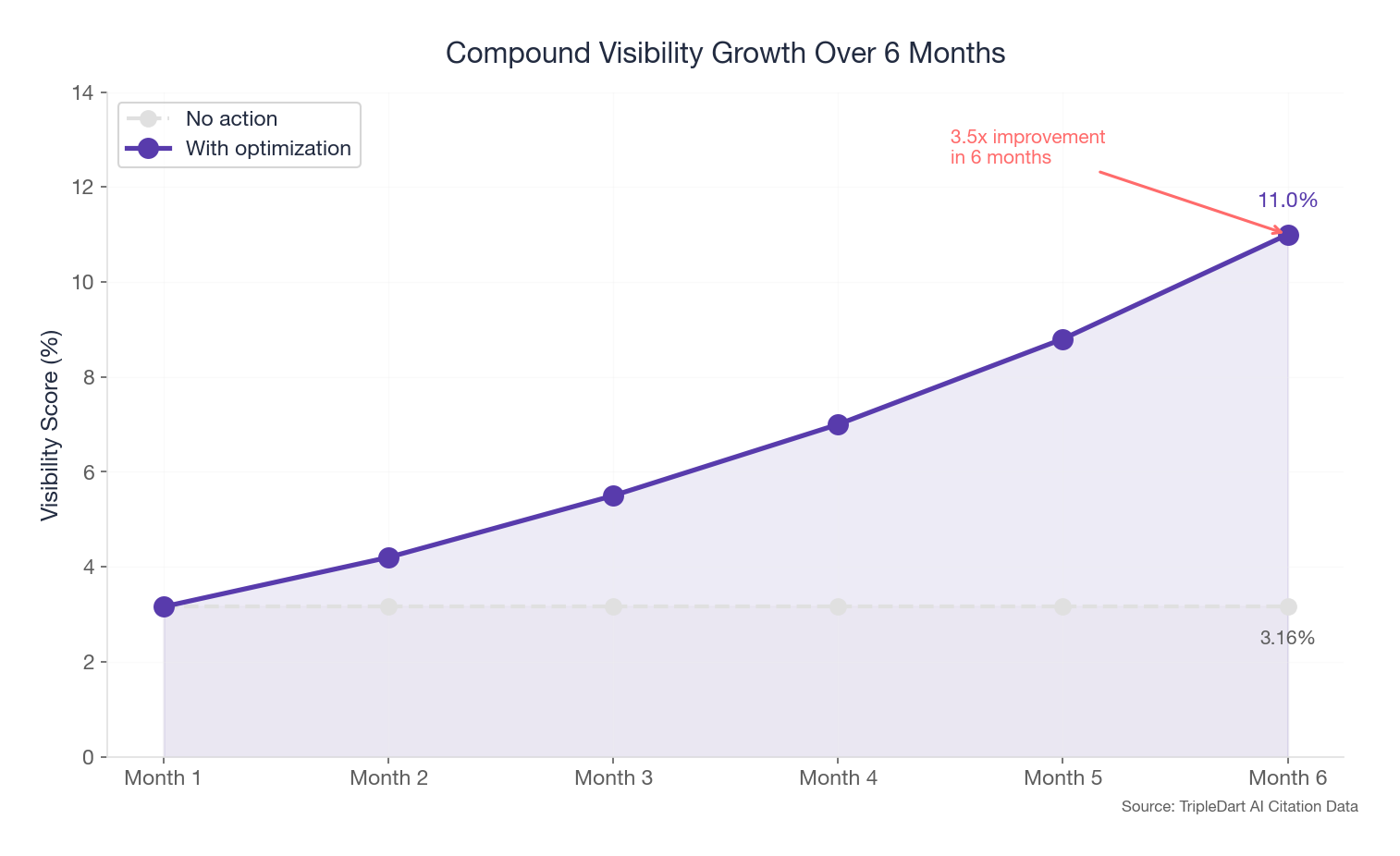

- AI visibility is volatile. One challenger brand peaked at 13.6% visibility and collapsed to 0.64% within two months. Sustained investment is required.

- AI-referred visitors converted 31% better than other traffic in Adobe's 2025 holiday-season data. This is not just a visibility play. It is a pipeline play.

You spent two years building domain authority, publishing content, and climbing Google rankings.

Then one morning, AI entered the scene.

You type your category into Claude. Three competitors get named. Your brand does not exist.

The buyer who just asked that question will never know you exist. And increasingly, that buyer is skipping Google entirely.

This is not a hypothetical scenario. It is happening right now across B2B SaaS.

The macro picture is stark:

- Gartner predicts traditional search volume will drop 25% by 2026 (this year) as AI assistants absorb discovery behavior

- AI-driven traffic to retail surged 4,700% year over year by mid-2025, according to Adobe Analytics

- When Google's AI Overviews appear in search results, organic click-through rates drop by 61%

- ChatGPT alone has reached 900 million weekly active users, creating an entirely new discovery channel that most B2B brands have not optimized for

The old playbook of ranking in the top ten and collecting clicks is eroding. A new one is forming.

Over 90 days, we monitored 185,000+ AI responses across six platforms: ChatGPT, Perplexity, Claude, Gemini, Google AI Mode, and Google AI Overview. That gave us 690,000+ citation events and 260,000+ sentiment mentions spanning dozens of B2B brands.

This guide is everything we learned about Claude SEO, distilled into a framework you can act on this quarter.

What this guide covers:

- What Claude SEO is and why it matters more than any other AI platform for B2B brands

- How Claude decides which brands to cite (three filters, in order)

- The seven levers that drive Claude visibility, prioritized by impact

- Benchmarks, brand case studies, and a platform-by-platform strategy you can execute immediately

AI platform behavior changes quickly. We recommend re-auditing your Claude visibility quarterly and checking for platform updates monthly.

Key Terms Used in This Guide

If you are new to AI visibility, here is a quick reference for the core terms used throughout this guide. Each term is defined in the context of how AI platforms like Claude surface and recommend brands.

AI Visibility: A measure of how often and how prominently your brand appears in AI-generated answers. Unlike traditional search rankings, AI visibility is binary at the query level: you are either named in the response or you are not. Visibility combines mention rate (how often you appear) with position (where in the answer you appear).

Mention Rate: The percentage of relevant queries where an AI platform names your brand. The median for B2B SaaS brands is around 9%. A mention rate below 5% means your brand is structurally invisible to AI.

Citation: When an AI platform references a specific URL from your website or a third-party source that discusses your brand. Citations can link to owned domains (your website) or third-party domains (G2, Reddit, industry publications). Owned-domain citations drive direct traffic; third-party citations build training-data authority.

Share of Voice: Your brand’s share of all brand mentions within your category across AI responses. If AI platforms mention five brands in your space and yours appears in 20% of those mentions, your share of voice is 20%.

Sentiment Score: The split of positive, neutral, and negative language AI models use when describing your brand. AI platforms reflect the sentiment found across their training data and retrieved sources. A brand with 65% positive sentiment and 10% negative sentiment is in a healthy range for B2B SaaS.

Training Data: The corpus of text, web content, and documents that an AI model was trained on before deployment. Content that was well-linked, widely referenced, and present across authoritative third-party sources during the training window carries outsized weight in AI responses.

Real-Time Retrieval: The process by which AI platforms search the live web to augment their pre-trained knowledge when answering a query. Claude uses retrieval to pull in current content, which is why freshly published utility pages and comparison content can earn citations even if they were not in the original training data.

Topical Authority: The depth and breadth of content a brand has published around a specific topic area. Brands with dense content clusters (500+ unique cited URLs) consistently outperform brands with thin coverage. AI models treat topical depth as a proxy for expertise.

Generative Engine Optimization (GEO): The practice of optimizing content to increase visibility in AI-generated responses. First formalized in a 2024 Princeton University study (Aggarwal et al., ACM KDD 2024), GEO encompasses strategies like adding statistics, citing authoritative sources, and structuring content for passage-level extraction. Claude SEO is a platform-specific application of GEO principles.
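To make the definitions above concrete, here is a minimal sketch of how mention rate, share of voice, and a sentiment split could be computed from a log of monitored AI answers. The `MonitoredResponse` record shape and the toy data are hypothetical; real monitoring tools (including Slate) export their own formats and may weight these metrics differently.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class MonitoredResponse:
    """One AI answer to one tracked query (hypothetical record shape)."""
    platform: str
    query: str
    # Brands named in the answer, in order of appearance, each listed once.
    brands_named: list = field(default_factory=list)


def mention_rate(responses, brand):
    """Percentage of responses that name `brand` at least once."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if brand in r.brands_named)
    return 100.0 * hits / len(responses)


def share_of_voice(responses, brand):
    """`brand`'s percentage of all brand mentions across the responses."""
    counts = Counter(b for r in responses for b in r.brands_named)
    total = sum(counts.values())
    return 100.0 * counts[brand] / total if total else 0.0


def sentiment_split(labels):
    """Percentage of 'positive', 'neutral' and 'negative' labels."""
    if not labels:
        return {}
    counts = Counter(labels)
    return {k: 100.0 * counts[k] / len(labels) for k in ("positive", "neutral", "negative")}


# Toy data: four answers naming hypothetical brands A, B and C.
responses = [
    MonitoredResponse("claude", "best e-signature tool", ["A", "B"]),
    MonitoredResponse("claude", "HIPAA e-signature", ["B", "C"]),
    MonitoredResponse("claude", "alternatives to A", ["C"]),
    MonitoredResponse("claude", "e-signature pricing", ["B"]),
]
print(mention_rate(responses, "A"))    # 25.0
print(share_of_voice(responses, "B"))  # 50.0
```

Note the difference the two metrics capture: brand B appears in three of four answers (75% mention rate) but holds 50% share of voice, because the other brands split the remaining mentions.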

Methodology and Data Sources

How We Built This Data Set

- Brand citations analyzed: 690,000+

- Query scenarios monitored: 260,000+

- Platforms: Claude, ChatGPT, Gemini, Perplexity, Google AI Mode, Google AI Overview

- Monitoring period: 90 days (Q4 2025 – Q1 2026)

- Data collection: Slate, TripleDart's proprietary AI monitoring platform

A note on data interpretation: Observed data (citation rates, mention rates, sentiment scores) comes directly from our monitoring infrastructure. Inferences about causality (e.g., "brand mentions drive visibility") are based on correlation analysis cross-referenced with third-party research (Ahrefs, Princeton GEO study). We flag where we are drawing inferences versus reporting measurements.

What Is Claude SEO?

Most B2B brands do not exist in AI answers.

When a buyer asks Claude which tools to use, the typical brand shows up less than one time in ten. The few that do tend to dominate. There is no "page one" here. You are either in the answer or you are invisible.

Claude SEO is the practice of optimizing your brand so Anthropic's Claude cites, recommends, or mentions you when users ask relevant questions.

Traditional SEO gets you ranked in Google's blue links. Claude SEO gets you placed inside a synthesized answer where only a handful of brands get named.

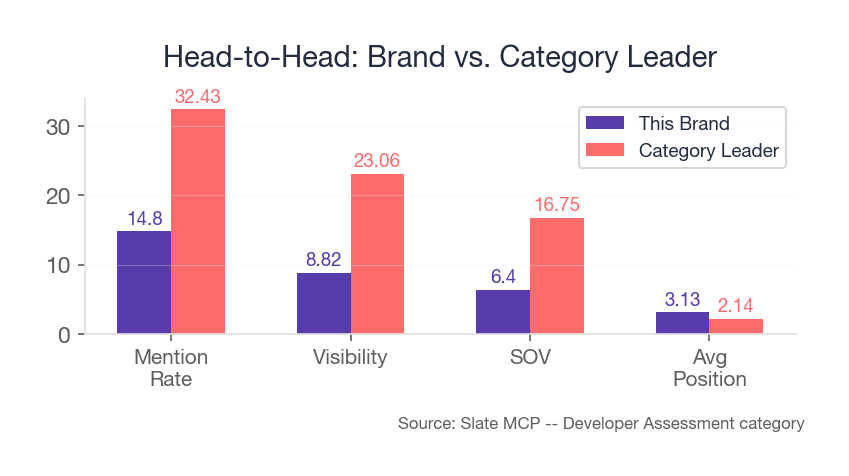

The gap between brands that invest in this and those that do not is enormous. A category-dominant brand we track shows up in over 77% of relevant AI queries. A billing platform in the same dataset shows up in zero.

Your buyers are already asking questions like:

- "What's the best payment gateway for Indian businesses?"

- "Which e-signature tools are HIPAA compliant?"

- "What are the best alternatives to [leading e-signature provider]?"

If your brand is not in the answer, you are not losing a click. You are losing the entire moment of buyer curiosity. That moment used to happen on Google. Increasingly, it happens inside an AI chat window.

The question is not whether AI answers are replacing search. It is which platform gives your brand the best shot at being seen.

Why Claude Stands Out Among AI Platforms

The platform your buyer opens matters more than most teams realize. The same brand, tracked on the same queries, can be 4x more visible on one platform than on another.

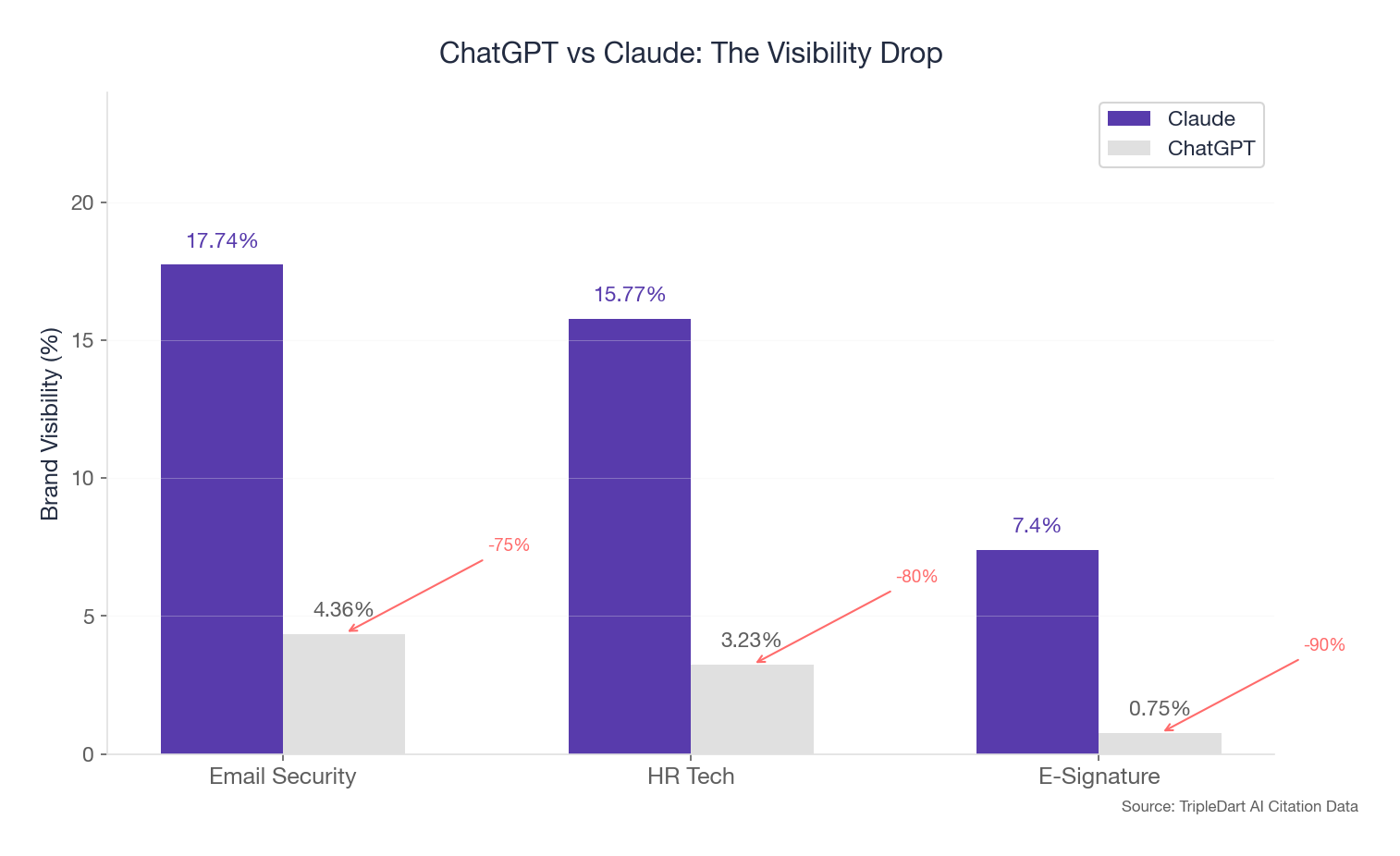

Take ChatGPT. Despite having the largest consumer user base, it consistently delivered the lowest brand visibility of any platform we tracked.

The pattern repeats across nearly every brand. If your AI search strategy is calibrated only to ChatGPT because it has the most users, you are optimizing for the wrong platform.

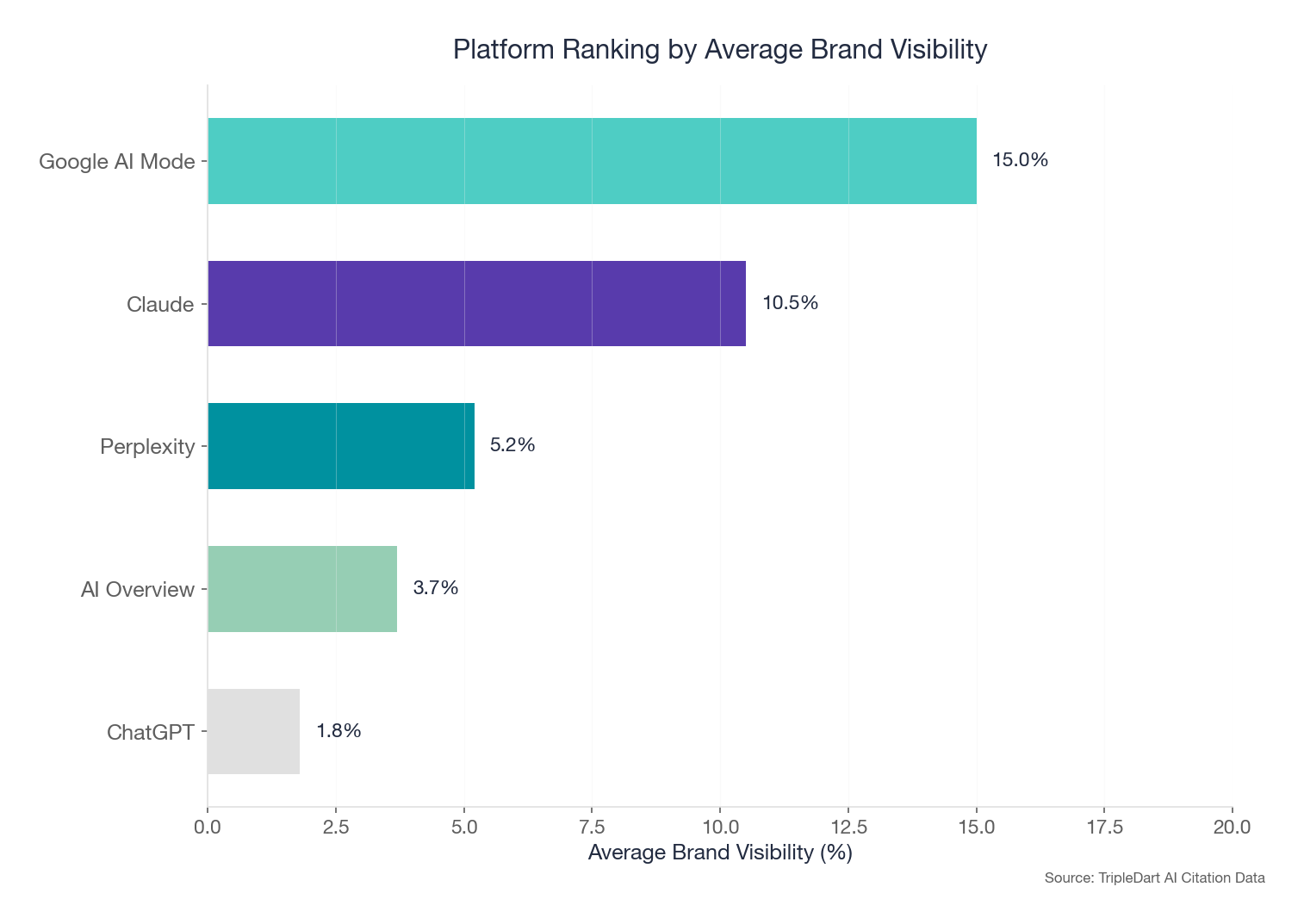

Claude's "not mentioned" rate of 54% is the lowest of any platform we track. Its balance between pre-trained knowledge and real-time retrieval is also the most even of the six. And its owned-domain citation rate is 9.1%, double ChatGPT's 4.5%. That means Claude is both more likely to name your brand and more likely to link directly to your website.

Source: TripleDart Slate monitoring, Q4 2025–Q1 2026. Observed data from 185,000+ monitored AI responses across six platforms.

Here is the pipeline argument: Adobe found that during the 2025 holiday shopping season, AI-referred visitors converted at a 31% higher rate than visitors from other channels. These are not just eyeballs. They are higher-quality eyeballs. When an AI platform names your brand in a recommendation, the buyer arrives with pre-built trust.

Why this matters for B2B teams:

- Low competition: The median B2B SaaS mention rate is around 9%. Most brands are not trying yet. There is room to gain ground before the space gets crowded.

- High buyer intent: Users asking Claude are mid-funnel, evaluating tools. One email security brand hit an 83.99% mention rate on competitor queries versus 26.42% overall. That is a 3.2x boost when the buyer is actively comparing options.

- No paid placement: You cannot buy your way in. Every citation in our dataset was earned.

- Compounding returns: Brands with authority see stable visibility over time. A leading fintech platform held between 48% and 62% visibility across 12 consecutive weeks.

- Enterprise audience: Claude's users skew toward knowledge workers and technical buyers. Because its owned-domain citation rate is double ChatGPT's, more of that traffic lands directly on your site rather than on aggregators.

But visibility is not guaranteed to hold.

One e-signature challenger peaked at 13.6% visibility in late January and cratered to 0.64% by late March. An email security brand saw visibility drop from roughly 25% to 10% as its query set expanded, revealing niche strength but category-wide weakness.

Claude SEO is not a set-it-and-forget-it channel. It requires ongoing investment.

Claude names brands more often and links to owned domains at double the rate of its closest competitor. But earning that citation requires understanding what Claude looks for.

How Claude Decides What to Cite

Claude checks three things, in this order. Miss any one and you are filtered out.

Think of it as a three-stage gate. Your brand has to clear each one to earn a citation.

Training data establishes whether Claude knows you exist. Real-time retrieval determines whether your content is useful enough to surface. Sentiment filters decide whether Claude trusts you enough to recommend.

Stage 1: Training Data

Claude's foundational knowledge comes from its training corpus. Content that was well-linked and widely referenced before the training cutoff carries outsized weight.

The distribution of citations is heavily skewed toward a handful of platforms. In the email security space, YouTube, Reddit, G2, Wikipedia, and GitHub dominated, with YouTube and Reddit each pulling over 1,500 citations.

For many brands we work with, the pattern was similar but at much larger scale: Reddit, LinkedIn, and YouTube were the top three third-party sources, each pulling thousands of citations.

If your brand is not showing up on Reddit threads, G2 comparison pages, YouTube walkthroughs, and LinkedIn discussions, you are missing the platforms that feed AI training data. No amount of on-site content can fully compensate.

Stage 2: Real-Time Retrieval

When Claude searches the web to augment its answers, utility content wins. Not blog posts. Useful tools and guides.

In one case, a cybersecurity brand's single diagnostic tool page earned 78 citations, outperforming what dozens of standard blog posts would generate. Error-fix guides matched near-homepage-level performance. A leading fintech platform's pricing page pulled over 2,600 citations, and a "payment success rate tips" post earned 381.

Claude retrieves what is most useful, not what is most recently published. A well-built tool page or a definitive troubleshooting guide will outperform dozens of keyword-targeted blog posts.

Stage 3: Sentiment Filters

Claude does not just count mentions. It reflects how your brand is perceived.

Across our dataset, average positive sentiment is roughly 65%, with a range from 50% to 77%. But sentiment is not uniform across themes.

Pricing transparency is the one theme where negative sentiment exceeded positive for multiple brands. AI models surface complaints aggressively when they exist in the source material.

Look at these potential worst-case scenarios:

On the flip side, some brands generate near-universal positive sentiment. An email security brand's safety/security mentions were 98.3% positive. A leading fintech platform's brand perception ran 99.5% positive.

The bottom line: audit what AI says about your brand's pricing and support before optimizing for more mentions. More visibility with negative sentiment is worse than less visibility with positive framing.

Sentiment by Theme: What AI Models Say About Brands (Across 5 Categories)

AI does not just mention your brand. It evaluates you, theme by theme. Here is how sentiment distributes across some common themes in our monitoring data.

Pricing is the most consistently negative theme across all categories. Pricing transparency is not just good practice. It is a measurable AI sentiment lever.

Brand perception content runs near 100% positive everywhere. These pages (about us, mission, founder stories) are built-in positive amplifiers that most teams neglect.

Customer support sentiment splits sharply. The professional services firm runs 99% positive. The logistics brand runs 88%. But when support sentiment turns negative (as we see with the email platform at 22% negative), AI models surface those complaints prominently.

Claude SEO vs. Google SEO

The difference between these two channels comes down to one thing: in Google, ranking sixth still earns impressions. In Claude, you are either named or you do not exist.

When the category-leading provider holds 33% visibility and a challenger manages under 2%, that is not a ranking difference. The challenger barely exists in the conversation. This winner-take-most dynamic makes Claude SEO higher-stakes per query, but also higher-reward for brands willing to invest.

In Perplexity, everyone gets named, but so do your competitors, with direct links to their domains. Perplexity generates 4.6x more citations than ChatGPT, but roughly 1 in 5 point to a competitor's domain.

Who Gets Cited

Google SEO rewards domain authority and backlinks. The top 10 share the page.

Claude SEO rewards content depth, named authorship, and brand consistency. Only 2 to 4 brands make the answer.

Where Mid-Market Brands Win

Google is hard-fought. Domain authority takes years.

Claude is the best platform for mid-market B2B, with 4x to 10x higher visibility than ChatGPT.

Perplexity is volume-friendly but competitive.

What Content Performs

Google rewards keyword-optimized, well-linked pages.

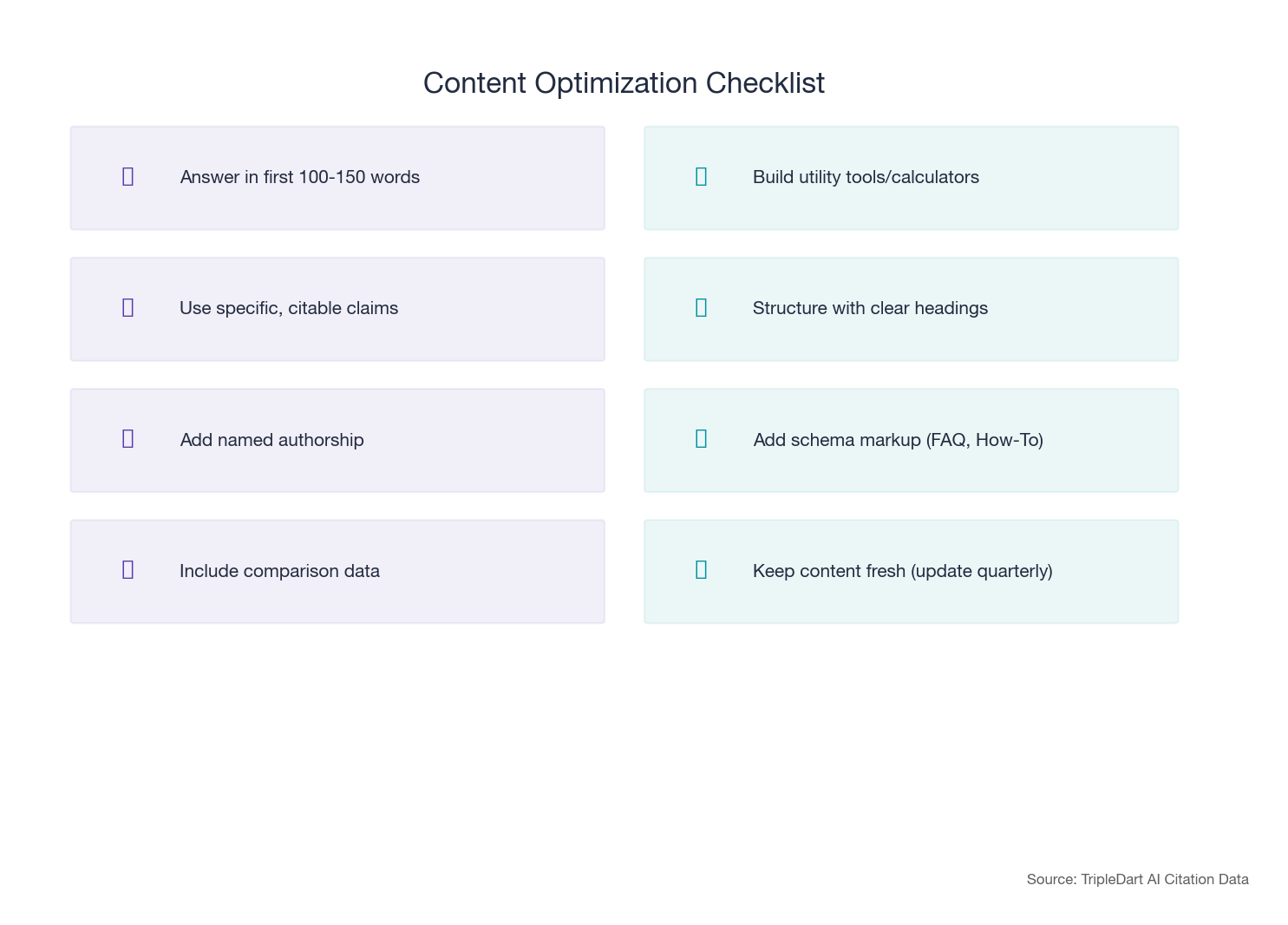

Claude rewards answer-first content with named experts and schema.

Perplexity disproportionately cites integration docs, API guides, and utility pages.

The practical takeaway: optimize for Claude first (highest quality citations), monitor Perplexity (highest volume, manage competitor co-citation), and maintain your Google SEO foundation (it feeds Google AI Mode).

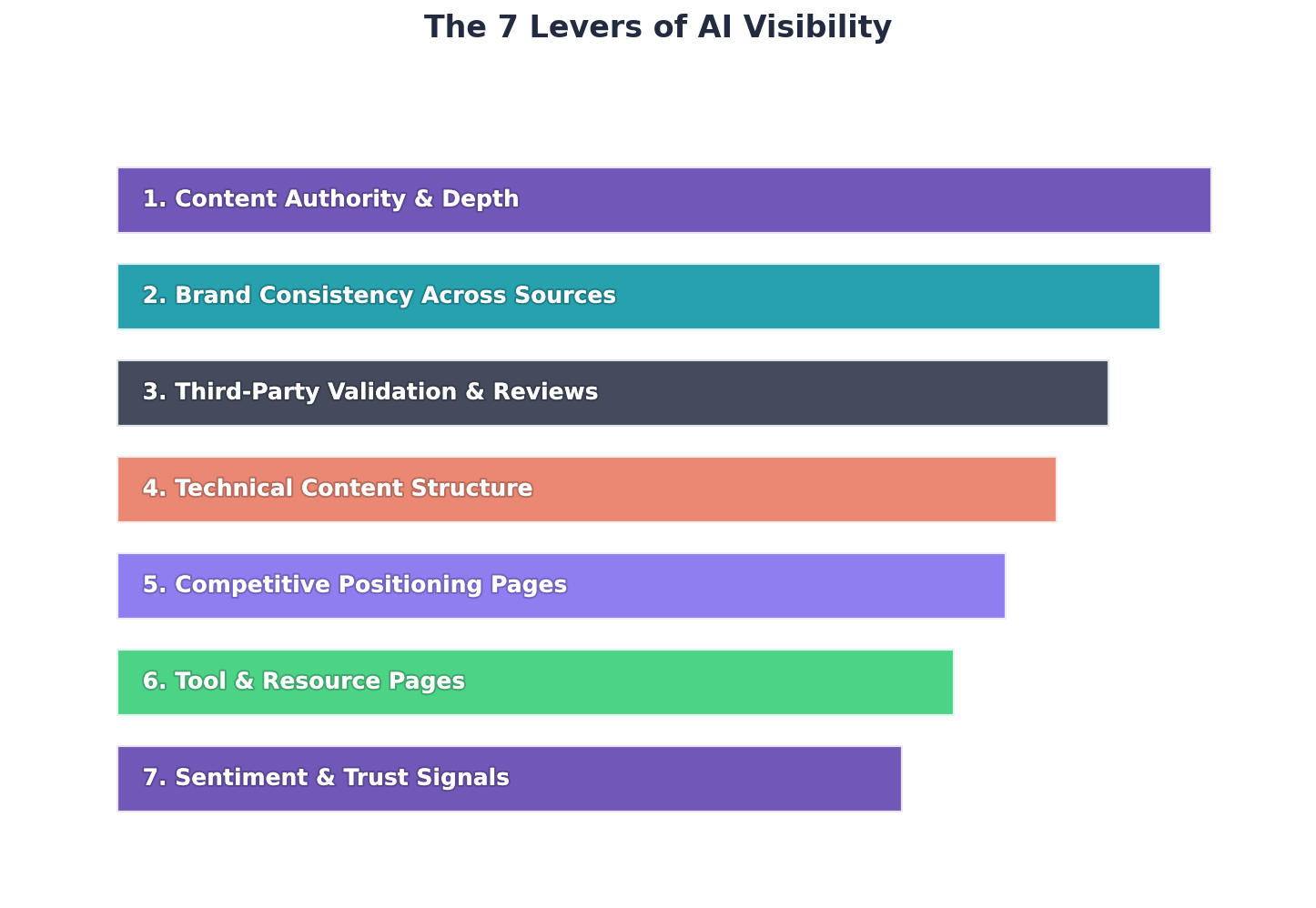

The 7 Levers That Drive Claude Visibility

These seven levers emerged from analyzing which content, brand signals, and structural factors correlate with higher mention rates. They are not a checklist to work through in isolation. Each one builds on the last, creating a compounding effect that separates visible brands from invisible ones.

We have ordered them by observed impact, from the foundation that everything else depends on to the multiplier that ties it all together.

Lever 1: Utility Content Over Blog Posts

This is the foundation. Without it, nothing else compounds.

Shallow, keyword-stuffed content does not get cited. The content that earns Claude's attention solves a real problem. When a user asks "how do I fix a DMARC error," Claude wants to point them to the page that resolves it, not the page that talks around it.

What the Citation Data Reveals

That is why a single diagnostic tool page for a client earned 100+ citations while dozens of standard blog posts earned around 10. A leading fintech platform's pricing page pulled over 2,600 citations because it answered the question buyers were actually asking.

Niche compliance content punches far above its weight too. A mid-market e-signature platform's HIPAA-compliant e-signature page earned citations across five of six platforms, despite covering a narrow topic that probably draws fewer than 500 Google searches per month.

What to do: Audit your content library for "utility assets." Tool pages, calculators, diagnostic guides, and compliance explainers. If you do not have a free tool in your category, build one. Tool pages earn 6x to 30x more citations than standard blog posts.
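One way to run that audit is to tag every cited URL with a content type and compare average citations per page against your blog baseline. The sketch below assumes you can export `(url, content_type, citation_count)` rows from a monitoring tool; the URLs and counts are hypothetical placeholders, not figures from our dataset.

```python
from collections import defaultdict
from statistics import mean


def average_citations_by_type(pages):
    """Average citation count per page, grouped by content type."""
    grouped = defaultdict(list)
    for _url, content_type, citations in pages:
        grouped[content_type].append(citations)
    return {t: mean(counts) for t, counts in grouped.items()}


def lift_vs_baseline(averages, baseline="blog"):
    """How many times more citations each type earns than the baseline type."""
    base = averages.get(baseline)
    if not base:
        return {}
    return {t: avg / base for t, avg in averages.items() if t != baseline}


# Hypothetical export: (url, content_type, citation_count)
pages = [
    ("/tools/dmarc-checker", "tool", 100),
    ("/guides/fix-dmarc-error", "guide", 60),
    ("/blog/email-tips", "blog", 12),
    ("/blog/security-trends", "blog", 8),
]
lifts = lift_vs_baseline(average_citations_by_type(pages))
print(lifts)  # {'tool': 10.0, 'guide': 6.0}
```

Comparing per-page averages rather than totals keeps a large blog archive from masking how much each individual utility page earns.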

Once you have utility content that earns citations, the next question is: where else does your brand need to show up?

Want to find out how your utility content stacks up against what Claude actually cites? Book a free AI visibility audit with TripleDart and we will benchmark your content library against the citation patterns in our dataset.

Lever 2: Third-Party Mentions Are Non-Negotiable

Your owned content alone will not get you there. Claude draws heavily from third-party sources. And the data on this is now unambiguous.

An Ahrefs study of 75,000 brands found that brand mentions correlate 0.664 with AI visibility, compared to just 0.218 for backlinks. In traditional SEO, backlinks are the dominant signal. In AI visibility, third-party mentions correlate roughly three times as strongly.

Reddit, YouTube, G2, and LinkedIn dominate the citation landscape across every category we studied. The brands with the highest mention rates have deep third-party footprints.

For example, one client with a 79% mention rate had over 34,000 owned citations from more than 5,000 unique URLs. A challenger brand with a 5% mention rate had just 521 owned citations from 138 URLs.

How to Build Your Third-Party Presence

The takeaway is not just "get more mentions." AI visibility compounds. The more places your brand appears with useful context, the more training data and retrieval sources feed your visibility.

What to do: Build a systematic third-party strategy.

Prioritize:

- Reddit: Respond to threads with genuine expertise

- YouTube: Publish technical walkthroughs

- G2: Collect reviews aggressively

- GitHub: Invest here if you have developer-facing products

These platforms feed AI training data directly.

Third-party mentions get you into the training data. But what Claude pulls from those mentions depends on how consistently your brand tells its story.

Monitoring Your Third-Party Footprint

Tracking where your brand appears across AI training sources requires dedicated tooling. The AI visibility monitoring space is maturing quickly, with several categories of tools available in 2026.

Dedicated AI citation trackers: Tools like Slate (TripleDart’s proprietary platform), Otterly.ai, and Semrush’s AI Visibility toolkit monitor your brand’s presence across ChatGPT, Perplexity, Claude, Gemini, and Google AI Overviews. These platforms send real queries to each AI platform and report back on mention frequency, citation URLs, sentiment, and share of voice over time.

Brand mention monitors: Platforms like SparkToro, Brand24, and Mention track where your brand name appears across Reddit, forums, review sites, and social media. These sources directly feed AI training data, so a gap in brand mentions often predicts a gap in AI visibility.

Review platform dashboards: G2, Capterra, and TrustRadius each provide dashboards showing your review velocity, category ranking, and competitor positioning. Since domains with profiles on platforms like G2 and Capterra have significantly higher chances of being cited by AI platforms, monitoring your standing on these sites is critical.

Set up monthly check-ins with your monitoring stack. Track mention rate trends, flag any sudden drops in third-party coverage, and compare your citation footprint against your top three competitors. The brands in our dataset with the highest mention rates all had systematic monitoring in place before they began optimizing.
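The monthly check-in can be partly automated. This sketch flags any month where mention rate fell by more than a chosen percentage versus the prior month and reports the percentage-point gap to each competitor. The 25% drop threshold, the month labels, and all rates are hypothetical; tune them to your own volatility.

```python
def flag_drops(history, threshold_pct=25.0):
    """Return (month, % change) for months where mention rate fell by more
    than `threshold_pct` percent relative to the previous month."""
    flags = []
    for (_prev_month, prev_rate), (month, rate) in zip(history, history[1:]):
        if prev_rate <= 0:
            continue  # no meaningful relative change from a zero baseline
        change = 100.0 * (rate - prev_rate) / prev_rate
        if change < -threshold_pct:
            flags.append((month, round(change, 1)))
    return flags


def gap_to_competitors(my_rate, competitor_rates):
    """Percentage-point gap between each competitor's mention rate and yours."""
    return {name: round(rate - my_rate, 1) for name, rate in competitor_rates.items()}


# Hypothetical monthly mention rates (%) from a monitoring export.
history = [("2026-01", 12.0), ("2026-02", 11.5), ("2026-03", 6.0)]
print(flag_drops(history))  # [('2026-03', -47.8)]
print(gap_to_competitors(6.0, {"Competitor A": 20.0, "Competitor B": 9.5, "Competitor C": 4.0}))
```

A relative threshold catches collapses like the 13.6% to 0.64% slide described earlier, while the competitor gap shows whether a dip is yours alone or category-wide.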

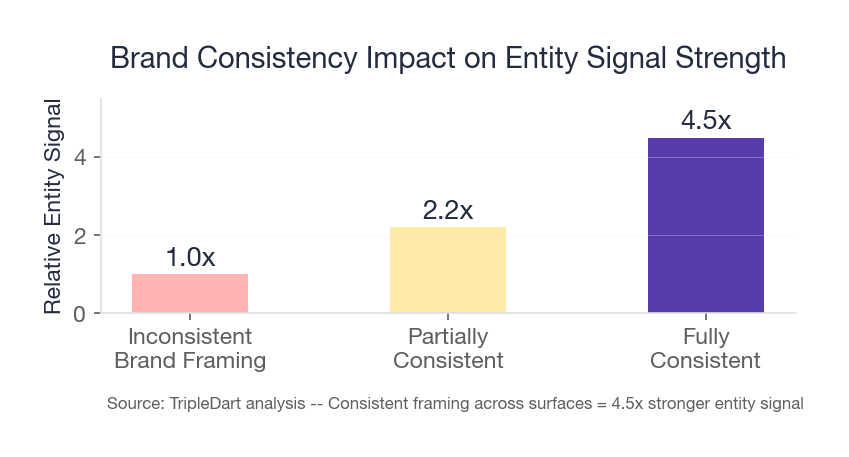

Lever 3: Consistent Brand Framing

How Claude describes your brand reflects how the internet describes your brand.

A leading fintech platform's brand perception ran 99.5% positive across more than 1,200 mentions on that theme. That consistency comes from a unified message across owned content, third-party coverage, and community mentions.

Contrast that with a challenger e-signature brand. Its positioning fragments: sometimes mobile-first, sometimes enterprise, sometimes "[competitor] alternative." Claude reflects that confusion. The compliance niche is where its framing is sharpest, and that is exactly where it performs best, jumping from a 5% mention rate overall to nearly 35% on compliance-specific queries.

What to do: Establish a canonical one-sentence brand descriptor and make sure it appears consistently across your website, G2 profile, Crunchbase listing, founder bios, and PR mentions.

BEFORE (fragmented framing — what Claude reflects back):

"[Brand] is a mobile-first e-signature tool. Some sources describe it as an enterprise document management platform. It's also mentioned as a DocuSign alternative in various reviews."

What went wrong: Three different narratives across owned content, G2, and review sites. Claude averages them into confusion.

AFTER (canonical framing — consistent across every surface):

"[Brand] is the e-signature platform built for compliance-first teams — HIPAA, SOC 2, and FERPA compliant out of the box." — This phrase appears identically on the homepage, G2 profile, Crunchbase, LinkedIn description, and press kit.

What changed: One sentence, repeated everywhere. Claude now consistently describes the brand using the compliance angle — which also happens to be the highest-intent query type.

Brand Framing: Before and After

Claude echoes whatever framing is most consistent across its sources. A model does not care how good your homepage is. It cares whether every third-party source it has ever read tells a coherent story about what you do.
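A quick way to enforce the canonical descriptor is to pull the copy from each surface and check that the phrase appears. This is a minimal sketch assuming you paste or scrape each profile's text yourself; "ExampleSign" and the surface copy are hypothetical, and exact-substring matching (after lowercasing and collapsing whitespace) is a deliberately strict choice that will also flag harmless paraphrases.

```python
import re


def normalize(text):
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return re.sub(r"\s+", " ", text).strip().lower()


def descriptor_coverage(canonical, surfaces):
    """Map each surface name to True if it contains the canonical descriptor."""
    target = normalize(canonical)
    return {name: target in normalize(text) for name, text in surfaces.items()}


canonical = "the e-signature platform built for compliance-first teams"
surfaces = {  # hypothetical copy pulled from each profile
    "homepage": "ExampleSign is the e-signature platform built for   compliance-first teams.",
    "g2_profile": "ExampleSign is a mobile-first e-signature tool.",
    "crunchbase": "ExampleSign is the E-signature platform built for compliance-first teams.",
}
missing = [name for name, ok in descriptor_coverage(canonical, surfaces).items() if not ok]
print(missing)  # ['g2_profile']
```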

Consistent framing builds the trust layer. The next lever makes that trust explicit.

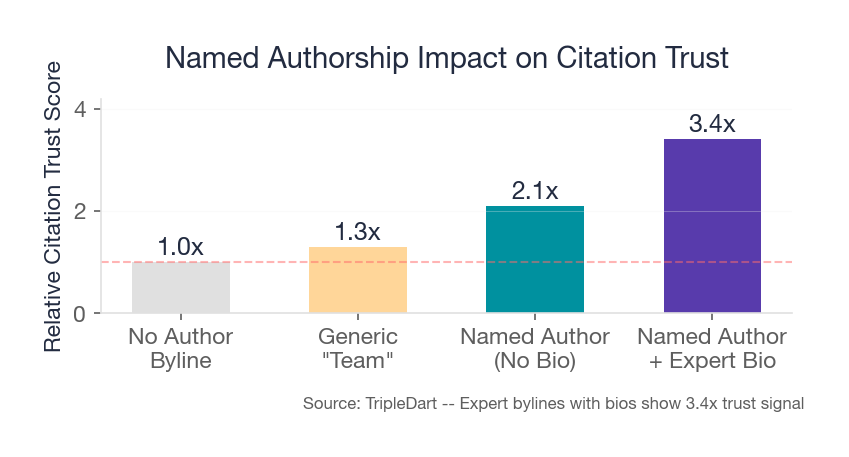

Lever 4: Named Authorship and Expert Credentials

Claude's training data reflects trust signals. Named authorship from credentialed experts is one of the clearest.

Brands in regulated industries showed measurably higher positive sentiment when their content carried expert bylines. The email security brand's safety/security content hit 98.3% positive sentiment. A leading fintech platform's safety/security theme was 81% positive.

What to do: Attach named experts with verifiable credentials to your highest-value content. Your CISO writes the security content. Your compliance lead writes the regulatory guides. Your engineering team publishes technical docs under their own names.

Implementation checklist for named authorship:

□ Add author bylines to every piece of content that deals with technical, legal, or security topics

□ Link each author name to a dedicated author page with credentials, LinkedIn URL, and publication history

□ Include the author's title and relevant certification in the byline itself (e.g., "Sarah Chen, CISSP, Head of Security")

□ Submit author profiles to Wikipedia, Crunchbase, and LinkedIn to create verifiable third-party identity

□ Have subject-matter experts (not content generalists) own the "expertise" pages in your content library
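If you add schema, as the comparison above recommends, named authorship can also be expressed as schema.org markup. The sketch below builds an `Article` object with a `Person` author using the standard `author`, `jobTitle`, `url`, and `sameAs` properties and serializes it as JSON-LD. The headline, author, and URLs are placeholders, and our data does not show whether Claude reads this markup directly; treat it as one way to make authorship machine-readable.

```python
import json


def article_jsonld(headline, author_name, job_title, author_page, linkedin_url):
    """Build schema.org Article markup with a named Person author."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,
            "url": author_page,        # dedicated author page with credentials
            "sameAs": [linkedin_url],  # verifiable third-party identity
        },
    }


markup = article_jsonld(
    "How to Read DMARC Reports",
    "Sarah Chen",
    "Head of Security",
    "https://example.com/authors/sarah-chen",
    "https://www.linkedin.com/in/example",
)
print(f'<script type="application/ld+json">{json.dumps(markup, indent=2)}</script>')
```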

With utility content, third-party presence, clear framing, and expert authorship in place, you have the foundation. The next three levers are where you go on offense.

Lever 5: Competitor Comparison Content

This is the single highest-leverage content type for B2B SaaS brands in Claude SEO.

When a buyer asks "what are the alternatives to [leading tool]," AI platforms look for content that explicitly makes comparisons. If you have published that comparison, you control the framing.

One email security brand's mention rate jumped from 26% overall to 84% on competitor queries. A category-dominant brand we track went from 77% to 91%.

The most-cited pages for a mid-market e-signature platform tell the same story. Its "Best Alternatives" and comparison roundup pages earned citations across five or more of six platforms. Comparison content achieves the broadest cross-platform reach of any content type.

What to do: Build comparison pages for every major competitor. Publish "X vs Y" pages, "Best Alternatives to X" roundups, and feature-comparison tables. Be honest and accurate. Claude can detect one-sided framing, and it will hurt your sentiment scores.

Comparison pages work best when the reader can get the answer immediately. That is what the next lever ensures.

Competitor comparison pages are the highest-ROI content type we have identified for Claude SEO. If you do not have the internal bandwidth to build and maintain these pages, TripleDart’s content team can build your full comparison library and track citation performance across all six platforms.

Lever 6: Answer-First Writing

Claude synthesizes answers. It privileges content that leads with the answer, not content that buries it under 500 words of background.

A mid-market e-signature platform's most-cited page, a "10 Best Electronic Signature Software" roundup (52 citations), works because it leads with a ranked, definitive answer. An email security brand's "How to Read DMARC Reports" guide (66 citations) does the same: it answers the question in the opening lines, then provides depth.

What to do: Restructure your top-of-funnel content so the first paragraph directly answers the query. Move context and elaboration below. If someone asks "What's the best X for Y?" your page should name the answer in the first two sentences.

Query: "What is the best HIPAA-compliant e-signature tool?"

BEFORE (SEO-era default — buries the answer):

"Electronic signatures have transformed how healthcare organizations handle patient consent forms and administrative documents. In recent years, compliance requirements under HIPAA have made it essential for healthcare providers to choose tools that meet strict data handling standards. This guide explores the key factors you should consider when selecting an e-signature platform..."

Why it fails: Claude reads the first sentence, gets no answer, and moves to the next source in its retrieval set.

AFTER (GEO-optimized — answer in sentence one):

"The best HIPAA-compliant e-signature tools are [Your Brand], DocuSign, and Adobe Acrobat Sign — each offering BAA agreements, AES-256 encryption, and full audit trails. [Your Brand] is the only one with built-in FERPA compliance as well, making it the strongest choice for healthcare organizations that also handle student records."

Why it works: The answer is in the first sentence. Claude extracts it immediately. The differentiator (FERPA) is embedded in the answer, not buried in paragraph 8.
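To triage a large library, a crude heuristic can check whether the answer (for example, your brand name) appears in the first two sentences of each page's opening paragraph. This is a hypothetical sketch: the sentence splitter is naive (abbreviations like "e.g." will confuse it), "ExampleSign" stands in for your brand, and a failing page still needs a human rewrite.

```python
import re


def leading_sentences(text, n=2):
    """Naively split on '.', '!' or '?' followed by whitespace; return the first n sentences."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return sentences[:n]


def answers_first(first_paragraph, answer_terms, n=2):
    """True if any answer term (e.g. your brand) appears in the first n sentences."""
    head = " ".join(leading_sentences(first_paragraph, n)).lower()
    return any(term.lower() in head for term in answer_terms)


before = (
    "Electronic signatures have transformed how healthcare organizations handle "
    "patient consent forms and administrative documents. In recent years, compliance "
    "requirements under HIPAA have made it essential for healthcare providers to choose "
    "tools that meet strict data handling standards. This guide explores the key factors..."
)
after = (
    "The best HIPAA-compliant e-signature tools are ExampleSign, DocuSign, and Adobe "
    "Acrobat Sign. ExampleSign is the only one with built-in FERPA compliance as well."
)
print(answers_first(before, ["ExampleSign"]))  # False
print(answers_first(after, ["ExampleSign"]))   # True
```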

Answer-First Rewrite: Before and After

Answer-first pages earn individual citations. But to build the kind of authority that makes Claude default to your brand across dozens of queries, you need depth across your entire topic.

Lever 7: Topical Authority Through Content Depth

Isolated pages do not build citation momentum. Brands that build dense clusters around a topic earn outsized visibility.

One brand has nearly 1,000 unique URLs cited across AI platforms. Not because each page was individually optimized for AI, but because the collective depth signals authority. Its citations span the homepage, diagnostic tools, error-fix guides, comparison pages, and dozens of supporting articles.

Contrast that with a workflow automation brand that managed just a 4% mention rate and 1.3% visibility. Its content footprint was too thin to establish authority.

The encouraging part: that brand improved 5x over 90 days, proving that deliberate content investment works even from a near-zero baseline.

What to do: Map your category's key topics and build content clusters around each one. Include tools, guides, comparisons, templates, and documentation. Brands with 500+ unique cited URLs consistently outperformed those with fewer than 200.

Suggested Cluster Map: B2B SaaS Topic Hubs

Build a hub-and-spoke cluster for each pillar. Each spoke page should link back to the pillar and cross-link to adjacent spokes. Claude cites clusters, not standalone pages.
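The linking rule above can be turned into a checklist of required internal links. This sketch assumes "adjacent spokes" means neighbors in an ordered list of spoke pages (one reasonable reading, not the only one) and uses hypothetical DMARC URLs. Comparing the plan against a crawl of your existing links shows what is missing.

```python
def cluster_links(pillar, spokes):
    """Planned internal links as (from_url, to_url): every spoke links to the
    pillar, the pillar links to every spoke, and each spoke links to its
    neighbours in the ordered spoke list."""
    links = set()
    for i, spoke in enumerate(spokes):
        links.add((spoke, pillar))
        links.add((pillar, spoke))
        for j in (i - 1, i + 1):
            if 0 <= j < len(spokes):
                links.add((spoke, spokes[j]))
    return links


def missing_links(planned, existing):
    """Planned links not yet present in a crawl of the site, sorted for review."""
    return sorted(planned - set(existing))


plan = cluster_links("/dmarc", ["/dmarc/checker", "/dmarc/reports", "/dmarc/errors"])
crawled = [("/dmarc/checker", "/dmarc"), ("/dmarc", "/dmarc/checker")]  # hypothetical crawl
print(len(plan))                          # 10
print(len(missing_links(plan, crawled)))  # 8
```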

AI Visibility Benchmarks: What We See Across Brands

We monitor AI visibility across multiple B2B categories through our Slate platform. Here is how mention rates, visibility, and sentiment distribute across some of the brands in our dataset.

Reading the Benchmark Data

Three patterns emerge from this data.

Note on data interpretation: The metrics above (mention rate, visibility, sentiment) are directly observed through our Slate monitoring platform. Patterns described as "more visible brands have deeper content footprints" are correlational, not causal. We do not control for brand age, marketing budget, or category competition in this cross-brand comparison.

First, mention rate and visibility are not the same thing. The logistics brand gets mentioned in 10.35% of queries but captures only 2.89% visibility because its average position is 5.63. The restaurant tech brand gets mentioned less often (8.5%) but achieves higher visibility (3.36%) because it averages position 4.1. Position drives visibility more than frequency.
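The gap is easier to see as a ratio. Using only the figures quoted above, this divides visibility by mention rate to get a rough "yield" per mention. This is simple arithmetic on the reported numbers, not Slate's visibility formula, which this guide does not spell out.

```python
# Figures quoted in the benchmark discussion (percentages and average position).
benchmarks = {
    "logistics brand": {"mention_rate": 10.35, "visibility": 2.89, "avg_position": 5.63},
    "restaurant tech brand": {"mention_rate": 8.5, "visibility": 3.36, "avg_position": 4.1},
}

# Visibility earned per point of mention rate: a rough yield of each mention.
per_mention = {
    name: round(m["visibility"] / m["mention_rate"], 2) for name, m in benchmarks.items()
}
for name, ratio in per_mention.items():
    print(f"{name}: {ratio} visibility per mention-rate point "
          f"(avg position {benchmarks[name]['avg_position']})")
```

The restaurant tech brand converts each mention into roughly 0.40 points of visibility versus about 0.28 for the logistics brand, consistent with its better average position.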

Second, the gap between visible and invisible is not a gradient. It is a cliff. The email deliverability platform and the revenue management tool both sit below 0.1% mention rate. They are functionally nonexistent to AI. The jump from 0.03% to 2.09% to 8.5% represents entirely different strategic realities.

Third, sentiment varies dramatically by category. The accounting firm runs near-zero negative sentiment (0.9%). The restaurant tech brand absorbs 13% negative. The email platform takes 15%. Categories where pricing and product quality are actively debated generate more negative signal for AI to surface.

What to Do This Quarter: Visibility-Based Priority Plan

Benchmarking Your Claude SEO Performance

Citation share is the new share of voice.

If you have been reading this guide and wondering "where do I actually stand," this section gives you the benchmarks to find out. Here are the four metrics that matter and realistic targets for each.

The Visibility Staircase

Before diving into individual metrics, here is a quick way to assess your starting position.

Mention Rate

This is the percentage of relevant queries where your brand gets named at all. The median for B2B SaaS brands is around 9%. If you are below that, you have a structural gap: either your third-party mentions are too thin or your content footprint is too shallow.

The jump from mid-range to strong performer is where most of the strategic work happens. Getting from 8% to 20% is achievable with a focused content and third-party strategy over a quarter. Getting from 0% to 8% requires building a content foundation from scratch.
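If you log raw AI answers yourself, mention rate is simple to compute. This is a minimal sketch that assumes you have saved one answer string per query run. The brand names are hypothetical. Whole-word, case-insensitive matching is a simplification, because it misses misspellings and abbreviations.

```python
import re
from typing import Iterable

MEDIAN_B2B_SAAS_MENTION_RATE = 0.09  # median quoted in this guide


def mention_rate(responses: Iterable[str], brand: str) -> float:
    """Share of answers that name `brand` at least once (whole word, any case)."""
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    answers = list(responses)
    if not answers:
        return 0.0
    return sum(1 for text in answers if pattern.search(text)) / len(answers)


answers = [
    "Top picks: Acme, Globex, and Initech.",
    "Most teams shortlist Globex or Initech.",
    "acme is a solid option for mid-market teams.",
    "Initech leads this category.",
]
rate = mention_rate(answers, "Acme")
print(f"{rate:.0%} vs median {MEDIAN_B2B_SAAS_MENTION_RATE:.0%}")  # 50% vs median 9%
```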

Visibility and Share of Voice

Visibility measures how prominently you appear, not just whether you are mentioned. Share of voice measures your slice of all brand mentions in your category.

The median visibility across B2B SaaS brands is just 3.16%. Top quartile starts above 8.5%. The median share of voice is 1.75%, with top quartile above 5.84%.
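Share of voice needs only a tally of which brands were named. The sketch below assumes you have extracted one list entry per brand mention across all tracked answers. The brand names are hypothetical. It compares the result with the medians and top-quartile thresholds quoted above.

```python
from collections import Counter
from typing import Dict, Iterable

# Benchmarks quoted in this guide, expressed as fractions.
VISIBILITY_MEDIAN, VISIBILITY_TOP_QUARTILE = 0.0316, 0.085
SOV_MEDIAN, SOV_TOP_QUARTILE = 0.0175, 0.0584


def share_of_voice(mentions: Iterable[str]) -> Dict[str, float]:
    """Each brand's slice of all brand mentions in the category."""
    counts = Counter(mentions)
    total = sum(counts.values())
    return {brand: n / total for brand, n in counts.items()} if total else {}


def benchmark_band(value: float, median: float, top_quartile: float) -> str:
    """Place a metric relative to the quoted median and top-quartile cutoff."""
    if value > top_quartile:
        return "top quartile"
    if value >= median:
        return "above median"
    return "below median"


mentions = ["Leader"] * 40 + ["Rival"] * 15 + ["YourBrand"] * 3 + ["Other"] * 42
sov = share_of_voice(mentions)
print(sov["YourBrand"], benchmark_band(sov["YourBrand"], SOV_MEDIAN, SOV_TOP_QUARTILE))
```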

The category leader effect is steep. In some verticals, the gap between leader and challenger is nearly 19x. In others, it is as narrow as 1.16x. That variation matters. Your competitive gap determines your strategy. If you are within 2x, go broad. If you are at 5x or more, go narrow and own a niche first.
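The gap rule comes down to a ratio. This is a minimal sketch that assumes you compare the same metric (for example, mention rate) for the leader and for your brand. This guide gives no explicit rule for gaps between 2x and 5x, so the sketch leaves that band as a judgment call.

```python
def leader_gap(leader_metric: float, your_metric: float) -> float:
    """How many times larger the leader's metric is than yours."""
    if your_metric <= 0:
        return float("inf")
    return leader_metric / your_metric


def suggested_posture(gap: float) -> str:
    """Map a leader gap to the broad/narrow rule of thumb described above."""
    if gap <= 2:
        return "go broad"
    if gap >= 5:
        return "go narrow and own a niche first"
    return "judgment call (no rule given for 2x to 5x)"


gap = leader_gap(12.0, 10.35)
print(f"{gap:.2f}x", suggested_posture(gap))  # 1.16x go broad
```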

Query Coverage by Type

Not all queries deliver equal visibility. The difference between broad category queries and competitor comparison queries is dramatic.

For one brand we tracked, mention rate jumped from 26% on broad queries to 84% on competitor queries. That is a 3.2x boost. An enterprise brand that already had a high baseline saw a smaller lift in the same direction, from 77% overall to 91% on comparison queries.

Aim for: 60%+ mention rate on competitor-comparison queries and 15% to 25% on broad category queries. If your competitor-query mention rate is below 40%, your comparison content needs work.
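To check these targets, split mention rate by query type. The sketch below assumes each tracked query is labeled "broad" or "competitor". Those labels are hypothetical. The sample data simply mirrors the 26% and 84% example above. Broad-query rates above 25% are not flagged, because exceeding the range is not a problem.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass
class QueryResult:
    query_type: str  # hypothetical labels: "broad" or "competitor"
    mentioned: bool


def rate_by_type(results: Iterable[QueryResult]) -> Dict[str, float]:
    """Mention rate for each query type."""
    totals: Dict[str, int] = {}
    hits: Dict[str, int] = {}
    for r in results:
        totals[r.query_type] = totals.get(r.query_type, 0) + 1
        hits[r.query_type] = hits.get(r.query_type, 0) + int(r.mentioned)
    return {t: hits[t] / totals[t] for t in totals}


def coverage_flags(rates: Dict[str, float]) -> List[str]:
    """Compare per-type mention rates with the targets quoted in this guide."""
    flags = []
    competitor = rates.get("competitor")
    if competitor is not None:
        if competitor < 0.40:
            flags.append("competitor queries under 40%: comparison content needs work")
        elif competitor < 0.60:
            flags.append("competitor queries under the 60% target")
    broad = rates.get("broad")
    if broad is not None and broad < 0.15:
        flags.append("broad queries under the 15% to 25% target range")
    return flags


results = (
    [QueryResult("broad", i < 26) for i in range(100)]
    + [QueryResult("competitor", i < 42) for i in range(50)]
)
rates = rate_by_type(results)
print(rates, coverage_flags(rates))
```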

Sentiment Quality

Volume without quality is a liability. The average positive sentiment across B2B SaaS brands is roughly 65%. The range runs from 50% to 77%.

Target: 65%+ overall positive sentiment. Flag any theme where negative sentiment exceeds 30% for immediate attention. Pay special attention to pricing and support themes. Lean into safety, security, and compliance topics where positive sentiment runs above 95%.
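The 65% target and the 30% flag can be checked from per-theme sentiment counts. This is a sketch that assumes your monitoring tool exports positive, neutral, and negative mention counts for each theme. The counts below are illustrative and do not come from our dataset.

```python
from typing import Dict, List, Tuple

# theme -> (positive, neutral, negative) mention counts
ThemeCounts = Dict[str, Tuple[int, int, int]]


def sentiment_report(themes: ThemeCounts, flag_above: float = 0.30) -> Tuple[float, List[str]]:
    """Overall positive share plus themes whose negative share exceeds `flag_above`."""
    positive = sum(p for p, _, _ in themes.values())
    total = sum(p + n + g for p, n, g in themes.values())
    overall_positive = positive / total if total else 0.0
    flagged = sorted(
        theme
        for theme, (p, n, g) in themes.items()
        if p + n + g and g / (p + n + g) > flag_above
    )
    return overall_positive, flagged


themes = {
    "customer support": (30, 31, 39),
    "pricing": (60, 21, 19),
    "security": (96, 3, 1),
}
overall, flagged = sentiment_report(themes)
print(f"{overall:.0%} positive (target 65%+); investigate: {flagged}")
```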

Claude SEO for B2B SaaS: Three Query Types That Matter

B2B SaaS buyers have specific query patterns. They are not equally valuable, and optimizing for the wrong ones wastes effort.

Category Queries

Queries like "best payment gateway" or "top HR tech tools" are the most common but deliver the lowest per-query visibility. The reason: they spread mentions across many competitors. Every brand gets a small slice.

What to do: Category content is table stakes. You need it for baseline presence. But do not expect it to drive your visibility numbers.

Recommended page types for category queries: pillar overview page ("best [category] tools"), category comparison table, and "what is [category]" explainer. Structure each with a ranked list in the first 150 words.
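You can audit existing category pages against the "ranked list in the first 150 words" rule with a rough heuristic. This sketch assumes each page is available as plain text or Markdown with one list item per line. The three-item minimum is an assumption, not a figure from this guide.

```python
import re

NUMBERED_ITEM = re.compile(r"^\s*\d+[.)]\s+\S")


def ranked_list_in_opening(page_text: str, word_limit: int = 150, min_items: int = 3) -> bool:
    """True if at least `min_items` numbered list lines start within the
    first `word_limit` words of the page (heuristic, not a parser)."""
    words_seen = 0
    items = 0
    for line in page_text.splitlines():
        if words_seen >= word_limit:
            break
        if NUMBERED_ITEM.match(line):
            items += 1
        words_seen += len(line.split())
    return items >= min_items


good_page = "Best payroll tools for 2026\n\n1. Acme Payroll\n2. Globex HR\n3. Initech Pay\n"
slow_page = "intro " * 200 + "\n1. Acme Payroll\n2. Globex HR\n3. Initech Pay\n"
print(ranked_list_in_opening(good_page), ranked_list_in_opening(slow_page))  # True False
```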

Comparison Queries

This is where Claude SEO delivers outsized value. "X vs Y" and "alternatives to X" queries are mid-funnel, high-intent, and dramatically more visible.

The numbers we see are consistent: mention rates jump 2x to 3x on comparison queries versus broad category queries. These are the queries where AI platforms look for content that explicitly makes comparisons. If you have published that comparison, you control the framing.

What to do: Build comparison pages for every major competitor. Be fair and accurate, but make sure your brand is part of the narrative.

Recommended page types for comparison queries: "[Your Brand] vs [Competitor]" direct comparison, "[Competitor] alternatives" roundup (where you are listed), and "[Your Brand] pricing vs [Competitor]" page. Each should have a structured comparison table and a clear verdict in the opening paragraph.

Process and Technical Queries

Queries like "how to set up DMARC" or "how to implement HIPAA-compliant e-signatures" are bottom-funnel and generate the most citations per page.

For example, one fintech brand's error-fix guide earned 77 citations from a single page. These pages answer specific, high-intent questions definitively. They may rank for small search volumes on Google, but they punch far above their weight in AI responses.

What to do: Identify the 10 to 15 most common technical processes in your category and create definitive, step-by-step guides. Include free tools where possible: checkers, calculators, compliance checklists.

Recommended page types for process queries: step-by-step how-to guides (numbered, with screenshots), error-fix pages ("[Error]: causes and fixes"), and setup documentation. Use structured data (HowTo schema where applicable). One well-built process page can earn 50–100+ citations from a single URL.
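For process pages, you can generate HowTo markup from the numbered steps the page already shows. This is a minimal sketch using the schema.org HowTo and HowToStep types. The page title and DMARC steps are illustrative. You would embed the output in a `<script type="application/ld+json">` tag.

```python
import json
from typing import List


def howto_jsonld(name: str, steps: List[str]) -> str:
    """Build schema.org HowTo JSON-LD from an ordered list of step texts."""
    data = {
        "@context": "https://schema.org",
        "@type": "HowTo",
        "name": name,
        "step": [
            {"@type": "HowToStep", "position": i, "text": text}
            for i, text in enumerate(steps, start=1)
        ],
    }
    return json.dumps(data, indent=2)


markup = howto_jsonld(
    "How to set up DMARC",
    [
        "Confirm SPF and DKIM are configured for every domain that sends your mail.",
        "Publish a TXT record at _dmarc.yourdomain with a monitoring policy (p=none).",
        "Review the aggregate reports sent to your rua address.",
        "Tighten the policy to p=quarantine, then p=reject, once legitimate mail passes.",
    ],
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```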

Platform-by-Platform Strategy

Not every AI platform treats your brand the same. Here is how to think about each one.

Where Each Platform Excels

- Google AI Mode delivers the highest raw visibility for many brands. It favors established players and tends to surface brands with strong Google organic presence.

- Claude has the lowest "not mentioned" rate (54%) and the highest owned-domain citation rate (9.1%). It is the best platform for B2B brands that want direct traffic to their site.

- Perplexity generates 4.6x more citations than ChatGPT and has a competitor domain citation rate of 17.6%. Great for comparison queries, but be aware your competitors show up more here too.

- Gemini consistently delivers mid-to-high visibility. It is a reliable platform that rarely surprises in either direction.

- Google AI Overview favors established brands with strong domain authority. Smaller brands often see lower numbers here.

- ChatGPT has the lowest brand visibility across the board. Do not calibrate your strategy to it just because it has the most users.

Cross-Platform Citation Patterns

Claude's owned-domain citation rate (9.1%) is double ChatGPT's (4.5%), which makes it one of the most actionable findings in our data. It means a larger share of Claude's citations point to your own website rather than to aggregators, which gives you more opportunities to earn direct traffic.

The practical takeaway: optimize for Claude first (highest quality citations), monitor Perplexity (highest citation volume), and do not ignore Google AI Mode (highest raw visibility for many brands).
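To compute your own owned-domain citation rate per platform, classify each cited URL by domain. This sketch assumes you already collect the citation URLs each platform returns. The domains below are placeholders (`.example` is a reserved test TLD). Subdomains of a listed domain count toward that domain.

```python
from typing import Dict, Iterable, Set
from urllib.parse import urlparse


def _matches(host: str, domains: Set[str]) -> bool:
    """True if host is one of `domains` or a subdomain of one."""
    return any(host == d or host.endswith("." + d) for d in domains)


def citation_mix(urls: Iterable[str], owned: Set[str], competitors: Set[str]) -> Dict[str, float]:
    """Share of cited URLs pointing at owned, competitor, and third-party domains."""
    counts = {"owned": 0, "competitor": 0, "third_party": 0}
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        if _matches(host, owned):
            counts["owned"] += 1
        elif _matches(host, competitors):
            counts["competitor"] += 1
        else:
            counts["third_party"] += 1
    total = sum(counts.values())
    return {k: (v / total if total else 0.0) for k, v in counts.items()}


citations = [
    "https://www.yourbrand.example/pricing",
    "https://docs.yourbrand.example/setup",
    "https://rival.example/compare",
    "https://www.g2.com/categories/example",
    "https://www.reddit.com/r/SaaS/",
]
mix = citation_mix(citations, owned={"yourbrand.example"}, competitors={"rival.example"})
print(mix)  # {'owned': 0.4, 'competitor': 0.2, 'third_party': 0.4}
```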

Brand Lessons From Our Data

Here are four patterns from our dataset, each illustrating a distinct strategic lesson. If you recognize your brand in one of these patterns, the corresponding strategy applies directly.

Pattern 1: The Category Leader

One enterprise client represents what dominant AI visibility looks like. Over 77% mention rate. Nearly 54% visibility. Average position of 2.21.

What makes it work:

- Massive content surface area (over 5,000 unique cited URLs)

- Utility-first content (a pricing page alone pulled over 2,600 citations)

- Cross-platform consistency (mentioned on every platform we tracked)

Where it is vulnerable: Customer support sentiment (38.9% negative across more than 6,000 mentions on that theme) and pricing sentiment (18.8% negative across more than 41,000 mentions). These are real liabilities that AI models surface in their answers. Even category leaders have blind spots.

What this means for your brand: If you are the category leader, your biggest risk is not losing visibility. It is losing sentiment. Monitor customer support mentions monthly. One wave of negative reviews during a product incident can shift how Claude frames you for a long time. A large content footprint will not protect you if sentiment erodes.

Pattern 2: The Niche Authority

An email security brand shows what happens when you go deep on a focused topic. Its overall mention rate is 26%, but on competitor queries it jumps to 84%. Nearly 1,000 unique URLs are cited across AI platforms.

The strategy: Cover every corner of email authentication. Every major topic has a dedicated page, from DMARC setup to SPF flattening to specific error codes. The result is topical authority that AI models recognize.

The challenge: Visibility dropped as the query set broadened. This is a dilution effect, not a performance decline. The brand dominates its core niche but struggles on tangential queries. For niche players, that is fine. Own your corner first.

What this means for your brand: Deep niche authority is a legitimate path to high mention rates without competing on category breadth. If you are a focused product, own your topic completely before you try to expand. Claude rewards depth before breadth.

Pattern 3: The Challenger Brand

A mid-market e-signature platform illustrates the challenge of competing against a category giant. Its overall mention rate is around 5%, while the category leader holds over 33% visibility. On visibility, the leader is ahead by roughly 19x.

But look closer. In the compliance niche, this brand's mention rate jumps to nearly 35% with 17% visibility. Its HIPAA e-signature page and its "Best Alternatives" page each earn citations across five platforms.

The lesson for challengers: Do not fight the category leader on broad queries. Find the niche where your framing is sharpest and own it completely. For this brand, that is compliance-focused e-signatures.

What this means for your brand: Your fastest path to meaningful visibility is not more content. It is more specific content. Find the query type where your framing is naturally strongest and dominate that first. Broad category visibility follows niche authority, not the other way around.

The warning: This brand's visibility peaked at 13.6% in late January and collapsed to 0.64% by late March. Without sustained content investment, early gains evaporate fast.
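Collapses like this are easier to catch if you track drawdown from peak, not just the latest reading. This is a minimal sketch. The 50% alert threshold is an assumption. Only the 13.6% peak and the 0.64% endpoint come from the example above. The other readings are hypothetical.

```python
from typing import Sequence


def drawdown_from_peak(readings: Sequence[float]) -> float:
    """Fractional drop of the latest reading below the highest reading so far."""
    if not readings:
        return 0.0
    peak = max(readings)
    return 0.0 if peak <= 0 else 1 - readings[-1] / peak


ALERT_THRESHOLD = 0.5  # assumption: alert when visibility falls by half from its peak

visibility = [4.2, 9.7, 13.6, 7.9, 2.3, 0.64]  # percent; values other than 13.6 and 0.64 are hypothetical
dd = drawdown_from_peak(visibility)
print(f"down {dd:.0%} from peak", "ALERT" if dd > ALERT_THRESHOLD else "ok")  # down 95% from peak ALERT
```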

Pattern 4: The Near-Parity Opportunity

This is the most encouraging case for smaller brands. A logistics SaaS platform in our dataset holds a 10.3% mention rate against a category leader at just 12%. That is a gap of only 1.16x.

A mid-market restaurant tech provider we track shows a similar dynamic, sitting at 8.5% mention rate in a category where the leader has not pulled far ahead.

This is near-parity. With a focused content strategy and a modest push, these brands could flip the standings.

Not every category has an insurmountable leader. If you are in a space where the top player has not invested heavily in AI visibility, that is your window. It will not stay open forever.

What this means for your brand: Before you benchmark against the global category leader, benchmark against the leader in your specific market segment. The gap may be far smaller, and far more winnable, than the headline numbers suggest.

Quarterly AI Visibility Check-In Framework

AI platform behavior, citation patterns, and mention rates change meaningfully quarter over quarter. Use this framework to stay current.

Week 1: Full mention-rate audit. Run your complete query library across all six platforms. Compare mention rates, visibility scores, and share of voice against the previous quarter. Flag any brand that has gained or lost more than 5 percentage points.

Week 2: Content refresh cycle. Update your comparison pages whenever a competitor changes pricing, launches a new feature, or rebrands. Refresh your utility content (tool pages, calculators, compliance explainers) with current data and dates. AI models favor content that signals freshness through updated statistics and timestamps.

Week 3: Sentiment dashboard review. Check your sentiment scores by theme. Pay special attention to pricing, customer support, and ease-of-use themes. If negative sentiment on any theme has exceeded 30%, investigate the source and develop a response strategy. Publish content that directly addresses the negative narrative with data or customer evidence.

Week 4: Competitive intelligence pass. Review what your top three competitors have published since your last audit. Check whether new competitor content is earning AI citations that displace yours. Look for emerging platforms or AI model updates (new Claude versions, Perplexity algorithm changes, Google AI Mode rollouts) that could shift visibility by 10 to 20 percentage points within a single quarter.
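The Week 1 flag (any brand that gained or lost more than 5 percentage points) is a simple diff between two audits. This sketch assumes mention rates are stored as percentages per brand. It treats a brand missing from one quarter as 0%, which is also an assumption. The brands and values are illustrative.

```python
from typing import Dict


def qoq_movers(previous: Dict[str, float], current: Dict[str, float],
               threshold_pp: float = 5.0) -> Dict[str, float]:
    """Brands whose mention rate moved by more than `threshold_pp` points."""
    movers = {}
    for brand in previous.keys() | current.keys():
        delta = current.get(brand, 0.0) - previous.get(brand, 0.0)
        if abs(delta) > threshold_pp:
            movers[brand] = round(delta, 2)
    # Biggest losers first.
    return dict(sorted(movers.items(), key=lambda kv: kv[1]))


last_quarter = {"YourBrand": 8.5, "Leader": 33.0, "Rival": 10.3}
this_quarter = {"YourBrand": 14.0, "Leader": 31.0, "Rival": 4.9}
print(qoq_movers(last_quarter, this_quarter))  # {'Rival': -5.4, 'YourBrand': 5.5}
```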

Reacting to AI Platform Algorithm Updates

AI tools update their models and retrieval mechanisms frequently. When a new Claude version ships, or when Google rolls out changes to AI Mode, your visibility can shift overnight. Here is how to respond.

First, re-run your core query set within 48 hours of any announced platform update. Compare the results against your baseline. If your mention rate drops by more than 3 percentage points on any platform, investigate whether the change affects your content format (answer-first structure, schema markup) or your source authority (third-party citation patterns).

Second, check whether the update has changed how the platform handles citations. Some model updates shift the balance between training-data knowledge and real-time retrieval. If you see a sudden increase in citations from third-party sources and a drop in owned-domain citations, it may signal that the platform is weighting retrieval more heavily, which means your owned utility content needs to be even more discoverable.

Third, monitor competitor visibility shifts alongside your own. If a competitor’s mention rate jumps while yours holds steady, the platform update may have introduced a new signal that favors their content structure. Study what changed in their approach and adapt accordingly.
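You can script the first two checks against your stored baseline. This sketch assumes you keep per-platform snapshots with a mention rate (in percent) and the owned and third-party shares of citations (as fractions). The platform name and numbers are illustrative.

```python
from typing import Dict, List

Snapshot = Dict[str, Dict[str, float]]  # platform -> metrics


def post_update_check(baseline: Snapshot, after: Snapshot, drop_pp: float = 3.0) -> List[str]:
    """Flag mention-rate drops and owned-to-third-party citation shifts."""
    findings = []
    for platform, before in baseline.items():
        now = after.get(platform)
        if now is None:
            continue
        drop = before["mention_rate"] - now["mention_rate"]
        if drop > drop_pp:
            findings.append(f"{platform}: mention rate down {drop:.1f} pp")
        if (now["third_party_share"] > before["third_party_share"]
                and now["owned_share"] < before["owned_share"]):
            findings.append(f"{platform}: citations shifting from owned to third-party sources")
    return findings


baseline = {"claude": {"mention_rate": 12.0, "owned_share": 0.091, "third_party_share": 0.70}}
after = {"claude": {"mention_rate": 8.0, "owned_share": 0.05, "third_party_share": 0.78}}
for line in post_update_check(baseline, after):
    print(line)
```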

Ready to Audit Your Claude SEO?

If you started reading this guide after typing your category into Claude and seeing nothing, you now have the framework: the three filters Claude applies, the seven levers you can pull, the benchmarks to measure against, and the patterns that show what works.

The gap between knowing this and doing it is execution. Content architecture. Third-party campaigns. Citation monitoring across six platforms. Sentiment analysis by theme. Ongoing optimization as models update.

TripleDart has built citation monitoring infrastructure, content architectures, and third-party mention strategies across dozens of B2B SaaS categories. We have tracked millions of AI responses across platforms and helped brands move from single-digit mention rates to category-competitive visibility.

Book a meeting with our team to audit your brand's AI visibility and move forward with a Claude visibility strategy.

Keep This Guide Current

Quarterly Review Reminder: AI platform behavior, citation patterns, and mention rates change meaningfully quarter over quarter. We recommend: (1) running a full mention-rate audit every 90 days, (2) refreshing your comparison pages whenever a competitor changes pricing or features, (3) checking your sentiment dashboard monthly for emerging negative themes. Platform changes (new Claude versions, Perplexity algorithm updates) can shift your visibility by 10–20 percentage points within a single quarter.

Frequently Asked Questions

Is Claude SEO the same as AIO or GEO?

Claude SEO is a subset of the broader AI Optimization (AIO) and Generative Engine Optimization (GEO) discipline. The distinction matters because platforms behave very differently. A brand that is visible on Claude may be invisible on ChatGPT, and vice versa. Platform-specific optimization is necessary.

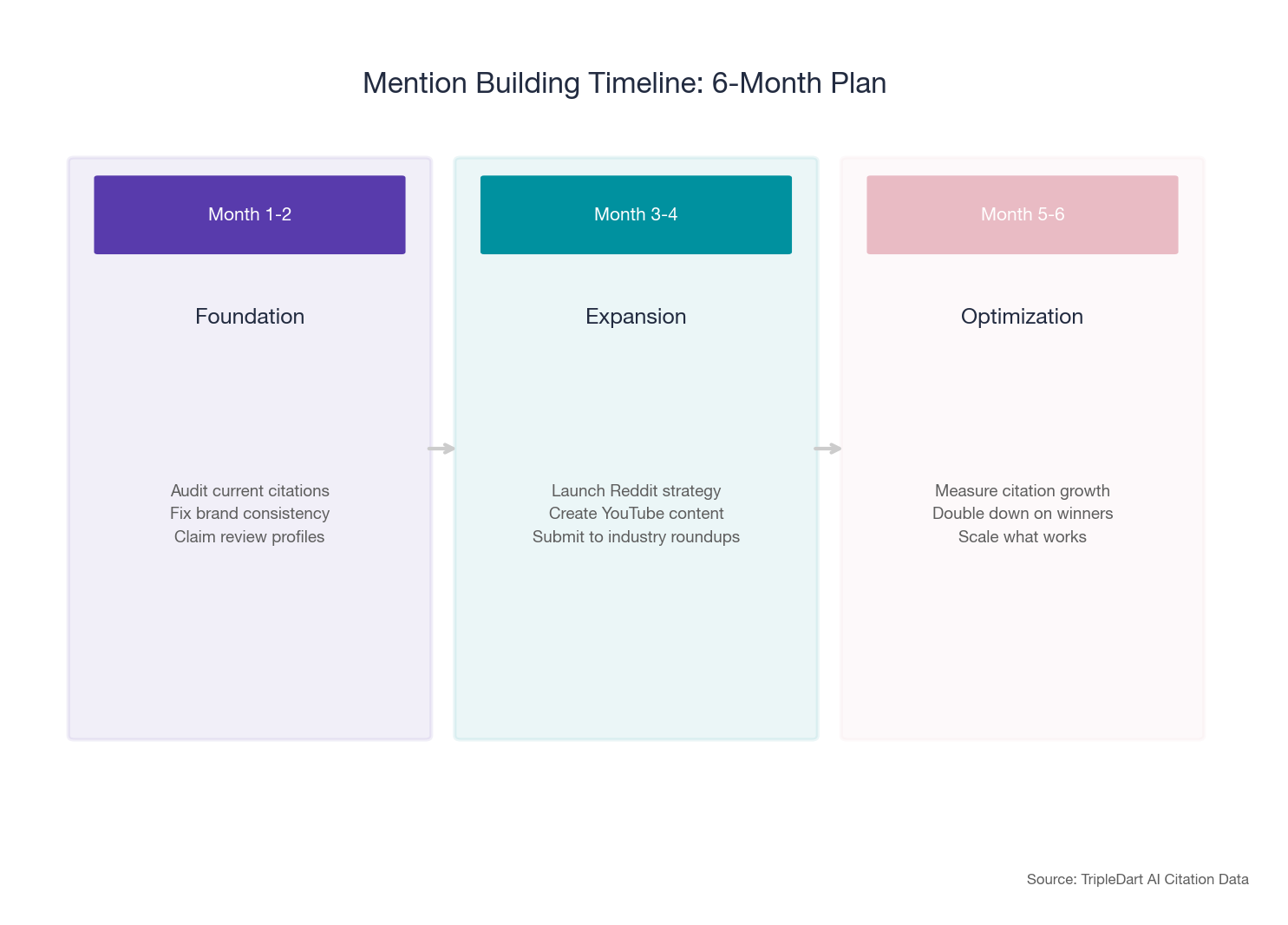

How long does it take to see results?

Both rapid gains and rapid losses are possible. Expect initial signal within 4 to 6 weeks of a focused content push. Plan for 90+ days to build the topical authority that produces durable visibility.

Can small brands compete with category leaders?

Yes, but not on broad category queries. The leader advantage is enormous in head-to-head visibility comparisons. Gaps of 5x to 19x are common on broad queries. Small brands win by owning a niche: compliance, a specific integration, a technical workflow. The near-parity pattern shows that in categories where the leader has not invested, the gap can be as narrow as 1.16x.

Does Claude use real-time content or only training data?

Both. Claude draws from its training corpus (reflecting content published before its cutoff) and from real-time web retrieval. In our data, both types of sources appear. Homepages and long-established content are training-data assets. Comparison pages and timely guides are likely retrieved in real time. You need a deep backlog of established, well-linked content and a steady stream of fresh, topically relevant pages.

Which platform should I prioritize?

Start with Claude if you are a B2B SaaS company. It delivers the highest owned-domain citation rate (9.1%), the lowest "not mentioned" rate (54%), and the strongest B2B brand visibility overall. Then monitor Perplexity for volume and Google AI Mode for raw reach.

Does Claude SEO require a separate content strategy from Google SEO?

Not entirely. The foundational work carries over. What changes is the format and framing: AI models reward answer-first writing and named expertise, while Google still weighs click-through signals and page experience. The biggest shift is the importance of third-party mentions. In traditional SEO, backlinks dominate. In AI visibility, brand mentions correlate 0.664 with visibility versus just 0.218 for backlinks, per an Ahrefs study of 75,000 brands.

What tools can I use to monitor my Claude SEO performance?

Several categories of tools now exist for tracking AI visibility. Dedicated AI citation trackers like Slate, Otterly.ai, and Semrush’s AI Visibility toolkit monitor brand mentions across multiple AI platforms. For a lower-cost starting point, you can write scripts that send consistent prompts to the Anthropic, OpenAI, and Perplexity APIs and parse responses for brand mentions. Perplexity’s Sonar API is especially useful because citation tokens are free. The key is consistency: track the same query set monthly and compare trends over time. No single tool covers every platform perfectly, so most serious teams combine a dedicated tracker with brand-mention monitoring tools like SparkToro or Brand24.
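A script-based starting point might look like the sketch below. It assumes the official `anthropic` Python SDK, with an `ANTHROPIC_API_KEY` environment variable set. The model ID, brand list, and prompt are placeholders to replace; check Anthropic's current model list for a valid ID. Repeating each prompt a few times is an assumption meant to smooth out answer-to-answer variation. Answers from the raw API may differ from what users see in the Claude apps, so treat the output as a consistent trend signal rather than a replica of the consumer experience.

```python
import re
from typing import Dict, List, Sequence

BRANDS = ["Acme", "Globex", "Initech"]  # hypothetical brands to track
MODEL = "claude-sonnet-4-5"  # assumption: swap in a current model ID


def brands_mentioned(text: str, brands: Sequence[str] = BRANDS) -> List[str]:
    """Brands named in an answer (whole word, case-insensitive)."""
    return [b for b in brands if re.search(rf"\b{re.escape(b)}\b", text, re.IGNORECASE)]


def ask_claude(prompt: str, model: str = MODEL) -> str:
    """Send one prompt and return the concatenated text of the reply."""
    import anthropic  # imported here so the parsing helper works without the SDK

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    message = client.messages.create(
        model=model,
        max_tokens=1024,
        messages=[{"role": "user", "content": prompt}],
    )
    return "".join(block.text for block in message.content if block.type == "text")


def track(prompts: Sequence[str], runs_per_prompt: int = 3) -> Dict[str, float]:
    """Mention rate per brand across repeated runs of a fixed prompt set."""
    tally = {b: 0 for b in BRANDS}
    total = 0
    for prompt in prompts:
        for _ in range(runs_per_prompt):
            for brand in brands_mentioned(ask_claude(prompt)):
                tally[brand] += 1
            total += 1
    return {b: (n / total if total else 0.0) for b, n in tally.items()}


# Example (makes live API calls):
# print(track(["What are the best email deliverability tools for B2B SaaS?"]))
```

Run the same prompt set on a fixed schedule and store the results, so that month-over-month comparisons reflect platform changes rather than changes to your prompts.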

Should I worry about newer AI platforms beyond the six covered here?

Yes, but proportionally. The AI discovery landscape is expanding. DeepSeek, Microsoft Copilot, and other emerging platforms are gaining traction, particularly in enterprise and developer contexts. Copilot in particular has shown rapid growth as it embeds directly into workplace tools like Microsoft 365. The core optimization principles in this guide (utility content, third-party mentions, consistent brand framing, answer-first structure) transfer across platforms. What changes is the weighting: each platform has a different balance of training data versus real-time retrieval, and some platforms weight review sites or GitHub presence more heavily than others. We recommend checking emerging platforms quarterly. If a new platform reaches meaningful adoption in your buyer’s workflow, add it to your monitoring set.

How important is schema markup for Claude SEO?

Schema markup is a supporting signal, not a primary driver. In our data, the brands with the highest mention rates all had well-structured schema (Organization, Product, FAQ, HowTo) on their key pages. But schema alone did not move the needle for brands that lacked the fundamentals: utility content, third-party mentions, and topical depth. Think of schema as making it easier for AI platforms to extract and verify information from your pages. If your content already answers the query well and your brand has strong third-party authority, schema helps AI models confirm that your content is trustworthy. The Princeton GEO study found that structured, verifiable content boosted AI visibility by up to 40%. Schema markup is one way to make your content more verifiable.
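As an example of the Organization markup mentioned above, the sketch below builds JSON-LD whose `sameAs` list points from your site to your third-party profiles. This is one way to tie schema to the third-party presence this guide emphasizes. All names and URLs are placeholders.

```python
import json
from typing import Sequence


def organization_jsonld(name: str, url: str, logo: str, same_as: Sequence[str]) -> str:
    """schema.org Organization JSON-LD with profile links in sameAs."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "Organization",
            "name": name,
            "url": url,
            "logo": logo,
            "sameAs": list(same_as),
        },
        indent=2,
    )


print(organization_jsonld(
    name="YourBrand",
    url="https://www.yourbrand.example",
    logo="https://www.yourbrand.example/logo.png",
    same_as=[
        "https://www.linkedin.com/company/yourbrand-example",
        "https://www.g2.com/products/yourbrand-example",
        "https://www.youtube.com/@yourbrand-example",
    ],
))
```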

What should I do if Claude is saying something negative about my brand?

Address the source, not the symptom. Claude reflects what its training data and retrieval sources say about your brand. If negative sentiment is appearing, it is because negative content exists in the places Claude draws from: review sites, Reddit threads, support forums, or industry publications. Start by auditing your sentiment dashboard to identify which themes are driving negative mentions. Pricing and customer support are the two most common problem areas in our data. Then work upstream: improve the actual experience where possible, respond to negative reviews on G2 and other platforms, publish transparent content that addresses the concern directly (a public pricing page, a support SLA commitment), and build positive third-party coverage that outweighs the negative signal over time. AI sentiment shifts gradually, but it does shift when the underlying source material changes.
